The 2026 Agent Browsing Security Baseline: 12 Controls to Stop Prompt Injection and Data Exfiltration

Agentic systems now read the web, click buttons, and move data between SaaS apps. That power comes with risk: prompt injection, agent hijacking, and silent data exfiltration. If you plan to scale agents in 2026, you need a clear, vendor‑agnostic baseline—especially for browsing agents.

Why now? Microsoft introduced an enterprise control plane for agents (Agent 365), signaling mainstream adoption of agent registries, access controls, and telemetry. Salesforce’s Agentforce 360 takes a similar path, pairing orchestration with governance. Meanwhile, the U.S. AI Safety Institute (NIST/US AISI) published guidance highlighting agent hijacking via indirect prompt injection in realistic environments. Together, these developments point to a simple conclusion: harden before you scale. Sources: Wired on Agent 365; Microsoft blog; Salesforce Agentforce 360; US AISI/NIST guidance.

The 12‑Control Baseline for Browsing Agents

Use these controls to reduce attack surface without killing velocity. Each control includes implementation notes you can apply to OpenAI Responses + Computer Use, Chromium automation, or vendor platforms.

1) Define trust boundaries for content

Agents must treat web pages, emails, PDFs, and user-uploaded docs as untrusted. Tag inputs by trust level and source, then condition policies on those tags (e.g., restrict tool use when content is untrusted). Microsoft’s Prompt Shields with Spotlighting is one design pattern for marking untrusted spans and blunting indirect injection. Source: Azure Prompt Shields.
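One way to picture the tagging step is the minimal sketch below. The tool names and the two-level trust model are assumptions made for illustration, not part of any vendor API.

```python
from dataclasses import dataclass
from enum import Enum


class Trust(Enum):
    TRUSTED = "trusted"      # e.g. operator or system instructions
    UNTRUSTED = "untrusted"  # web pages, emails, PDFs, user uploads


@dataclass(frozen=True)
class TaggedInput:
    text: str
    source: str  # where the content came from, e.g. a URL
    trust: Trust


# Hypothetical tool names; replace with your agent's real tool set.
READ_ONLY_TOOLS = {"read_page", "summarize"}
ALL_TOOLS = READ_ONLY_TOOLS | {"send_email", "submit_form"}


def allowed_tools(context: list) -> set:
    """Restrict to read-only tools if any input in the context is untrusted."""
    if any(item.trust is Trust.UNTRUSTED for item in context):
        return set(READ_ONLY_TOOLS)
    return set(ALL_TOOLS)
```

The policy is deliberately coarse: a single untrusted item downgrades the whole context, which is easier to reason about than per-span rules.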

2) Enforce allow‑lists for actions and destinations

Block high‑risk actions by default (publishing, purchasing, emailing external recipients). Maintain an allow‑list of domains and tools your agent can touch; prompt injection often succeeds by quietly pivoting to malicious endpoints. Keep reviewable policy files in Git and ship a CI check that fails on policy regressions.
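A deny-by-default check and a CI-style regression check might look like the sketch below. The policy contents, action names, and the definition of "regression" (any newly permitted domain or action) are assumptions for illustration.

```python
from urllib.parse import urlsplit

# Hypothetical policy; in practice this would be loaded from a reviewed file in Git.
POLICY = {
    "allowed_domains": {"example.com", "docs.example.com"},
    "allowed_actions": {"read_page", "fill_form"},
}


def is_allowed(action: str, url: str, policy: dict = POLICY) -> bool:
    """Deny by default: the action and the exact hostname must both be listed."""
    host = (urlsplit(url).hostname or "").lower()
    return action in policy["allowed_actions"] and host in policy["allowed_domains"]


def policy_regressions(old: dict, new: dict) -> list:
    """Return entries that a policy change newly permits, so CI can fail on them."""
    added = []
    for key in ("allowed_domains", "allowed_actions"):
        added += [f"{key}: {item}" for item in sorted(new[key] - old[key])]
    return added
```

Matching on the exact hostname avoids lookalike hosts such as `example.com.evil.test` passing a naive substring check.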

3) Least‑privilege credentials with duty segregation

Issue scoped, short-lived tokens per task. Separate roles: a “reader” agent cannot trigger purchases; a “purchaser” agent requires a human approval step and can’t read customer PII. This reduces blast radius and mitigates “second-order” attacks where a low-privilege agent coaxes a high-privilege peer to exfiltrate data. Source: Second-order injection overview.
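The reader/purchaser split can be sketched as follows. The scope strings, role names, and five-minute default lifetime are hypothetical, and a real deployment would issue tokens through its identity provider rather than in process.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TaskToken:
    value: str
    role: str
    scopes: frozenset
    expires_at: float


# Hypothetical role-to-scope mapping mirroring the reader/purchaser split.
ROLE_SCOPES = {
    "reader": frozenset({"web:read"}),
    "purchaser": frozenset({"orders:create"}),
}


def issue_token(role: str, ttl_seconds: int = 300, now: Optional[float] = None) -> TaskToken:
    now = time.time() if now is None else now
    return TaskToken(secrets.token_urlsafe(32), role, ROLE_SCOPES[role], now + ttl_seconds)


def authorize(token: TaskToken, scope: str, human_approved: bool = False,
              now: Optional[float] = None) -> bool:
    now = time.time() if now is None else now
    if now >= token.expires_at or scope not in token.scopes:
        return False
    # Purchases additionally need an explicit human approval.
    if scope == "orders:create" and not human_approved:
        return False
    return True
```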

4) Browser isolation and secret hygiene

Run the agent’s browser in a container/VM with a fresh profile per job. Disable password managers, third-party extensions, and clipboard sync. Inject secrets only via ephemeral environment variables and revoke them when the job ends. If the vendor platform exposes a managed browser, verify that it supports per-run isolation and policy-controlled downloads.
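For self-managed Chromium automation, the per-job profile can be sketched like this. The helper names are hypothetical, the switches shown are common Chromium command-line flags whose behavior can vary by version, and password-manager settings are usually handled through browser policy or preferences, which this sketch does not cover.

```python
import contextlib
import shutil
import tempfile


@contextlib.contextmanager
def fresh_profile(job_id: str):
    """Create a throwaway browser profile directory and delete it afterwards."""
    profile_dir = tempfile.mkdtemp(prefix=f"agent-{job_id}-")
    try:
        yield profile_dir
    finally:
        shutil.rmtree(profile_dir, ignore_errors=True)


def chromium_args(profile_dir: str) -> list:
    """Command-line switches for an isolated run (browser binary path not included)."""
    return [
        f"--user-data-dir={profile_dir}",  # profile lives only for this job
        "--disable-extensions",
        "--disable-sync",
        "--no-first-run",
    ]
```

Launching the browser inside the `with fresh_profile(...)` block means cookies and cached credentials are removed even if the job raises.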

5) Sanitization layer for untrusted content

Strip or neutralize embedded instructions before they reach the model. Research defenses like DataFilter remove adversarial instructions while preserving useful content, and they work as a plug-and-play layer if you can’t fine-tune base models. Source: DataFilter (2025).
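To show where such a layer sits, here is a deliberately naive, pattern-based stand-in. It is not DataFilter and not a substitute for a learned filter; the patterns are illustrative assumptions and would miss most real attacks.

```python
import re

# Illustrative patterns only; real injections are far more varied.
SUSPICIOUS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"disregard (the )?(system|previous) prompt", re.I),
    re.compile(r"you are now\b", re.I),
]


def sanitize(text: str) -> tuple:
    """Drop lines matching a suspicious pattern; return (cleaned text, drop count)."""
    kept, dropped = [], 0
    for line in text.splitlines():
        if any(p.search(line) for p in SUSPICIOUS):
            dropped += 1
        else:
            kept.append(line)
    return "\n".join(kept), dropped
```

Returning the drop count lets the caller log the event or escalate to a validator instead of silently discarding content.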

6) Multi‑agent validation before execution

Route risky intents (e.g., “send email to all customers”) through a validator agent that checks for policy breaches and suspicious cues (secret requests, wallet addresses, drive-by downloads). Multi-agent pipelines have shown strong reductions in prompt-injection success in lab settings; use them to detect and de-escalate risky requests. Source: Multi-agent defense pipeline.
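A rule-based stand-in for the validator shows the shape of the check. In production the validator would more likely be a second model; the cue patterns and the recipient threshold below are assumptions.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Verdict:
    allow: bool
    reasons: list = field(default_factory=list)


# Hypothetical cue patterns for the suspicious signals listed above.
CUES = {
    "secret request": re.compile(r"\b(api[_ ]?key|password|secret|token)\b", re.I),
    "wallet address": re.compile(r"\b0x[a-fA-F0-9]{40}\b"),
    "executable download": re.compile(r"https?://\S+\.(exe|msi|dmg|sh)\b", re.I),
}
MAX_RECIPIENTS = 10  # assumed threshold for "mass" messaging


def validate(intent: str, recipients: int = 0) -> Verdict:
    """Block the intent if any cue matches or the recipient count is too high."""
    reasons = [name for name, pat in CUES.items() if pat.search(intent)]
    if recipients > MAX_RECIPIENTS:
        reasons.append("mass messaging")
    return Verdict(allow=not reasons, reasons=reasons)
```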

7) Human‑in‑the‑loop for irreversible actions

Require explicit human approval for publishing, spending, mass messaging, deleting, or altering access controls. Don’t bury it in the UI—make approval a hard gate with clear diff previews of what will happen.
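A hard gate with a diff preview can be as small as the sketch below. The function names and the `approved_by` parameter are hypothetical; a real system would verify the approver's identity rather than accept a string.

```python
import difflib
from typing import Callable, Optional


class ApprovalRequired(Exception):
    """Raised when an irreversible action is attempted without approval."""


def preview(before: str, after: str) -> str:
    """Unified diff that a reviewer sees before approving a change."""
    lines = difflib.unified_diff(
        before.splitlines(), after.splitlines(),
        fromfile="current", tofile="proposed", lineterm="",
    )
    return "\n".join(lines)


def execute_irreversible(action: Callable, approved_by: Optional[str] = None):
    """Run the action only when a named human has approved it."""
    if not approved_by:
        raise ApprovalRequired("human approval is required for this action")
    return action()
```

Raising an exception, rather than returning a flag, makes it hard for calling code to skip the gate by accident.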

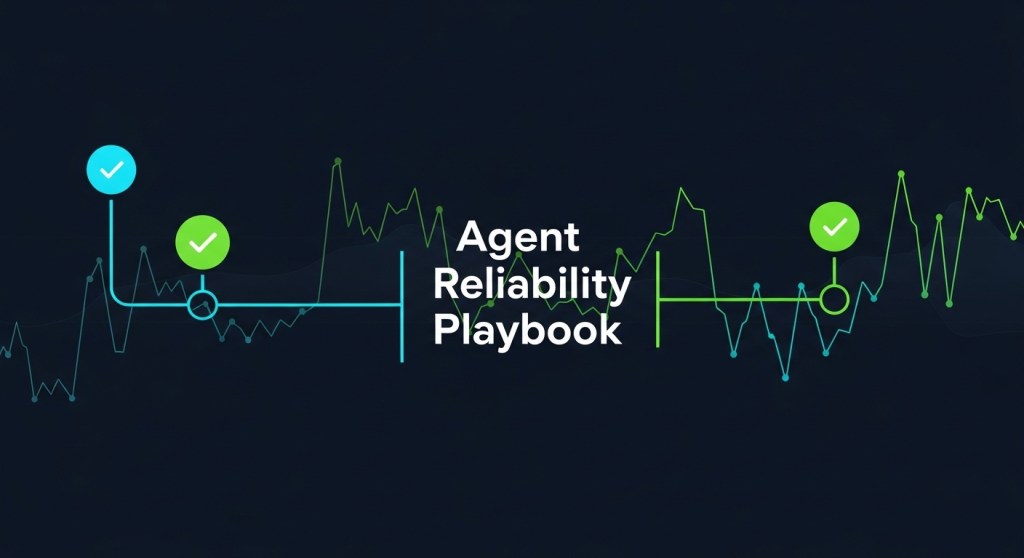

8) Telemetry with traces, not just logs

Instrument every tool call and browser step as spans with inputs/outputs, policy decisions, and scrubbed screenshots. Use OpenTelemetry conventions so you can pipe signals into your existing SIEM and create alerts on patterns like “untrusted → email_all@company.com.” For the how-to, see our reliability playbook, Agent reliability with OpenTelemetry.
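Once spans carry trust and tool attributes, an alert of the "untrusted, then email" kind reduces to a query over a trace. The sketch below assumes spans have been exported as plain dicts; the attribute names `input.trust` and `tool.name` are hypothetical, not official OpenTelemetry semantic conventions.

```python
def risky_traces(spans: list) -> set:
    """Return trace IDs where untrusted content was read before an email was sent."""
    tainted = set()
    flagged = set()
    for span in sorted(spans, key=lambda s: s["start"]):
        attrs = span["attributes"]
        if attrs.get("input.trust") == "untrusted":
            tainted.add(span["trace_id"])
        if attrs.get("tool.name") == "send_email" and span["trace_id"] in tainted:
            flagged.add(span["trace_id"])
    return flagged
```

In practice the same rule would usually be expressed in the SIEM's query language; the Python version only makes the ordering logic explicit.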

9) DLP and egress controls

Scan agent outputs and uploads for keys, PII, customer lists, and source code. Block exfil channels (personal email, paste sites, ghost S3 buckets). Keep clean “egress allow‑lists” for file uploads and email domains.
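A minimal egress check combining a content scan with a recipient allow-list could look like this. The two detectors and the allow-listed domain are illustrative assumptions; real DLP needs broader, tuned rules.

```python
import re

# Illustrative detectors only.
DETECTORS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}
EGRESS_EMAIL_DOMAINS = {"example.com"}  # hypothetical egress allow-list


def scan(payload: str) -> list:
    """Return the names of detectors that match the payload."""
    return [name for name, pat in DETECTORS.items() if pat.search(payload)]


def may_send(recipient: str, body: str) -> bool:
    """Allow email only to allow-listed domains and only when the scan is clean."""
    domain = recipient.rsplit("@", 1)[-1].lower()
    return domain in EGRESS_EMAIL_DOMAINS and not scan(body)
```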

10) Red‑team with agent‑specific frameworks

Adopt repeatable tests for agent hijacking. US AISI recommends environments like AgentDojo; research work such as AgentXploit automates black-box fuzzing for indirect prompt injection. Make red-teaming part of your release gate. Sources: US AISI/NIST; AgentXploit.

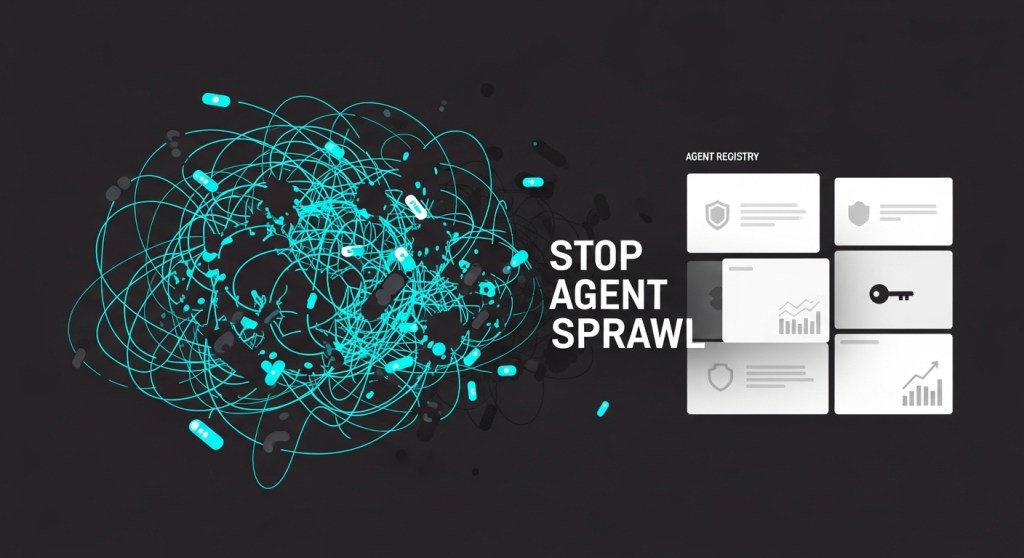

11) Register every agent and centralize policy

Maintain a single registry with owner, purpose, scopes, and data access. If you’re in the Microsoft stack, Agent 365 provides an admin control plane; otherwise, enforce a DIY registry with tags and signed policy bundles. For a blueprint, use our registry and access model guide, Stop Agent Sprawl. Source: Wired on Agent 365.
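For the DIY route, a registry record and a signed bundle can be sketched with the standard library. The field names are assumptions, and HMAC with a shared key is used here only for brevity; an asymmetric signature may be a better fit when verifiers should not be able to sign.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str
    purpose: str
    scopes: tuple
    data_access: tuple


def sign_bundle(record: AgentRecord, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the record."""
    payload = json.dumps(asdict(record), sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_bundle(record: AgentRecord, signature: str, key: bytes) -> bool:
    """Constant-time comparison of the expected and presented signatures."""
    return hmac.compare_digest(sign_bundle(record, key), signature)
```

Any change to an agent's scopes then invalidates the signature, which forces scope changes back through whoever holds the signing key.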

12) Circuit breakers and safe fallbacks

Ship kill‑switches per agent, per capability, and globally. Add rate and budget limiters. When policy triggers, degrade the agent into a read‑only analyst with guidance for the human user.
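A per-agent breaker that combines a kill switch with a spend budget might look like the sketch below. The class name, the dollar-based budget, and the two mode strings are assumptions; per-capability and global switches would follow the same pattern at a different scope.

```python
class CircuitBreaker:
    """Per-agent kill switch plus a simple spend budget."""

    def __init__(self, budget_usd: float):
        self.budget_usd = budget_usd
        self.spent_usd = 0.0
        self.killed = False

    def kill(self) -> None:
        """Trip the breaker manually, e.g. from an on-call runbook."""
        self.killed = True

    def mode(self) -> str:
        """'full' while healthy; 'read_only' once killed or over budget."""
        if self.killed or self.spent_usd >= self.budget_usd:
            return "read_only"
        return "full"

    def record_spend(self, usd: float) -> str:
        """Add to the running spend and return the resulting mode."""
        self.spent_usd += usd
        return self.mode()
```

The agent loop would check `mode()` before every tool call and fall back to analysis-only behavior when it returns `read_only`.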

14‑Day Rollout Plan

- Inventory & risk map (Day 1–2): List agents, tasks, tools, and data touched. Tag untrusted inputs and high‑risk actions.

- Policy + allow‑lists (Day 3–4): Create domain and action allow‑lists. Gate irreversible actions behind approvals.

- Isolation & secrets (Day 5–6): Containerize the browser. Rotate to short‑lived credentials per job.

- Sanitization + validation (Day 7–8): Add a sanitization layer (e.g., DataFilter) and a validator agent before execution.

- Telemetry (Day 9–10): Instrument traces for tool calls and browser steps; define alerts for risky patterns. See: Telemetry guide.

- Red‑team (Day 11–12): Run agent hijacking scenarios (AgentDojo patterns). Capture attack success rates pre/post controls.

- Governance (Day 13–14): Register all agents, owners, and scopes. Wire approvals and kill-switches into on-call runbooks. See: Registry baseline.

What “good” looks like (acceptance criteria)

- ASR drop: Prompt-injection attack-success rate (ASR) reduced by ≥80% relative to the pre-control baseline in your red-team suite after Controls 1–7.

- Zero‑trust execution: Untrusted content always forces read‑only mode unless a validator + human approval passes.

- Traceability: 100% of agent steps are traceable with inputs/outputs, policy decisions, and redactions.

- Containment: Any single agent compromise cannot email customers, publish content, or move funds without human sign‑off.
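The ASR criterion above is a relative reduction, which can be checked directly from red-team outcomes. A minimal sketch, assuming each red-team case is recorded as a boolean (`True` means the attack succeeded):

```python
def attack_success_rate(outcomes: list) -> float:
    """Fraction of red-team cases in which the attack succeeded."""
    if not outcomes:
        raise ValueError("no red-team outcomes recorded")
    return sum(outcomes) / len(outcomes)


def relative_reduction(asr_before: float, asr_after: float) -> float:
    """Relative ASR drop, e.g. 0.40 -> 0.06 is a reduction of 0.85."""
    if asr_before == 0:
        raise ValueError("baseline ASR is zero; reduction is undefined")
    return (asr_before - asr_after) / asr_before


def meets_asr_criterion(before: list, after: list, target: float = 0.80) -> bool:
    reduction = relative_reduction(attack_success_rate(before), attack_success_rate(after))
    return reduction >= target
```

For example, 40 successful attacks out of 100 before the controls and 6 out of 100 after gives a relative reduction of 85%, which passes the 80% bar.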

Related playbooks on HireNinja

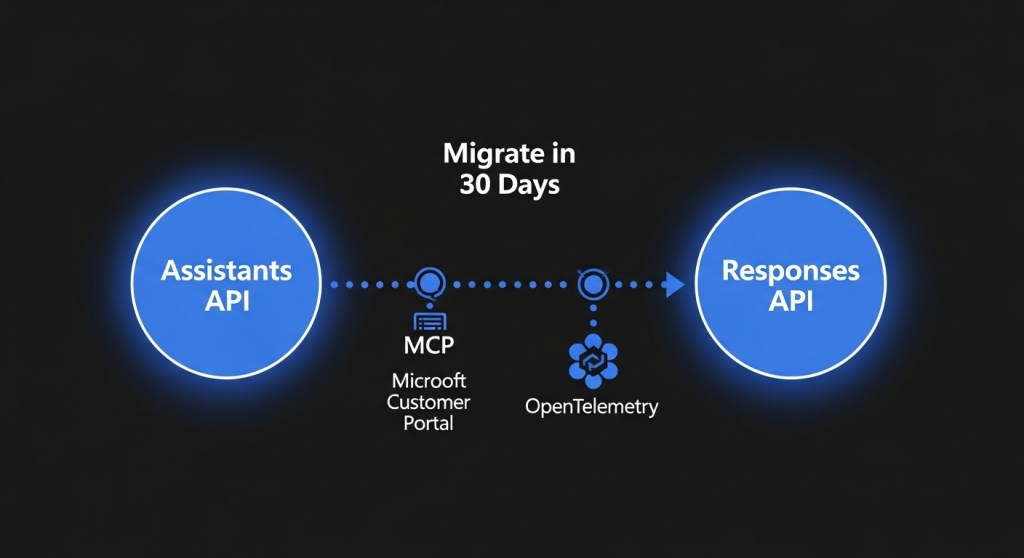

- Migrate from Assistants API to Responses API (for Computer Use + tracing).

- Agent Evaluation & Red‑Teaming.

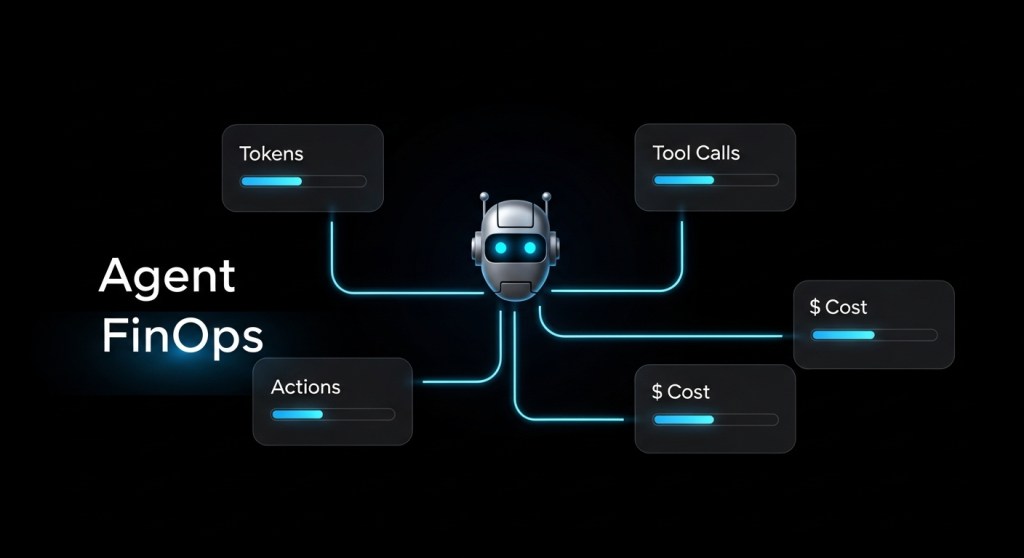

- Agent FinOps.

- Agentic Support Desk in 30 Days.

Notes on vendors and research

Control planes like Agent 365 and Agentforce 360 reflect where enterprises are headed: registries, access control, and observability by default. But this does not eliminate injection risk; you still need input sanitization, validation, and policy-enforced execution. See also: OpenAI’s Assistants → Responses migration and platform guidance on Computer Use security constraints. Sources: OpenAI docs; deprecation timeline; Zendesk’s claim.

Call to action: Want this baseline shipped in two weeks with telemetry, approvals, and runbooks? Talk to HireNinja. We’ll implement controls 1–12, wire traces to your SIEM, and certify agents with a red‑team pass. Subscribe or contact us to get started.