- Scan competitors for fresh signals (Agent 365, A2A, ACP, Visa/Stripe moves).

- Pick an outcome: ship a secure agent registry + observability in 14 days.

- Design for interop (A2A/MCP) and payments readiness (ACP/SPT).

- Instrument with OpenTelemetry GenAI spans/metrics from day one.

- Prove ROI with one production‑adjacent use case and a rollback plan.

Why this matters now

Microsoft announced Agent 365, a control plane to inventory, secure, and govern AI agents across your org, now available via Frontier early access. Coverage from Wired and Microsoft’s own blogs confirms the push toward an enterprise agent registry, access control, and telemetry built into Microsoft 365 and Entra. In parallel, Google’s A2A protocol is gaining traction (Microsoft is adding support), and Stripe’s Agentic Commerce Protocol (ACP) adds a standardized way for agents to pay merchants using a scoped SharedPaymentToken (SPT). Visa is piloting agent‑led commerce as well. Together, these moves signal that agent fleets are shifting from proofs‑of‑concept to audited production workflows in 2026.

What you’ll have in 14 days

- Agent registry + identity: A single inventory in Agent 365 with Entra Agent ID for at least 3 priority agents (internal or third‑party).

- Guardrails: Least‑privilege scopes, approval flows, and quarantine for shadow agents.

- Interop: One A2A handshake to a non‑Microsoft agent (or MCP tool server) to prove cross‑vendor workflows.

- Payments readiness: ACP/SPT sandbox flow documented and reviewed with compliance (no live cards).

- Observability: OpenTelemetry GenAI spans/metrics tracing your pilot end‑to‑end.

Prereqs (1–2 hours)

- Request Frontier access for Agent 365 (Microsoft early access) and enable Agent 365 in your tenant.

- Nominate a pilot team: IT admin, security, a product owner, and one developer.

- Pick one production‑adjacent workflow (e.g., weekly pricing updates, catalog enrichment, or level‑1 support triage).

The 14‑day plan

Days 1–3: Stand up the control plane

- Inventory: In Microsoft 365 Admin Center, open Agents → All Agents. Register known internal agents (Copilot Studio or third‑party) and issue Entra Agent IDs. Add tags for owner, purpose, data access, and environments.

- Guardrails: Define per‑agent policies: allowed resources, data boundaries, execution windows, and human‑in‑the‑loop checkpoints. Turn on quarantine for unregistered/shadow agents.

- Telemetry: Configure OpenTelemetry collectors; emit GenAI semantic spans and metrics from your selected agent framework (e.g., OpenAI client spans and agent spans).
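
To make the Days 1–3 guardrails concrete before you configure them in the admin center, here is a minimal local sketch of a registry record and a policy decision. It is not the Agent 365 or Entra API: `AgentRecord`, `register`, `decide`, and the resource names are all hypothetical, chosen only to illustrate tags, least privilege, human approval, and quarantine for unregistered agents.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """Hypothetical registry entry; field names are illustrative only."""
    agent_id: str
    owner: str
    purpose: str
    environments: set[str]
    read_resources: set[str] = field(default_factory=set)
    write_resources: set[str] = field(default_factory=set)
    # Resources whose writes need a human-in-the-loop checkpoint.
    approval_required: set[str] = field(default_factory=set)


REGISTRY: dict[str, AgentRecord] = {}


def register(record: AgentRecord) -> None:
    REGISTRY[record.agent_id] = record


def decide(agent_id: str, resource: str, action: str, environment: str) -> str:
    """Return 'allow', 'deny', 'needs_approval', or 'quarantine'."""
    record = REGISTRY.get(agent_id)
    if record is None:
        # Unregistered (shadow) agents are quarantined, not just denied.
        return "quarantine"
    if environment not in record.environments:
        return "deny"
    allowed = record.write_resources if action == "write" else record.read_resources
    if resource not in allowed:
        return "deny"
    if action == "write" and resource in record.approval_required:
        return "needs_approval"
    return "allow"


if __name__ == "__main__":
    register(AgentRecord(
        agent_id="pricing-bot",
        owner="ops",
        purpose="weekly pricing updates",
        environments={"staging"},
        read_resources={"catalog"},
        write_resources={"price_drafts"},
        approval_required={"price_drafts"},
    ))
    print(decide("pricing-bot", "price_drafts", "write", "staging"))
    print(decide("unknown-bot", "catalog", "read", "staging"))
```

Writing the policy down in this form first tends to surface gaps (for example, an agent with no named owner) before the real tenant configuration begins.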

Days 4–6: Prove interop

- A2A handshake: Choose a partner agent or internal service that exposes an A2A endpoint and Agent Card. Perform a simple task lifecycle across vendors (e.g., request a draft response from an external research agent and route it back to Teams). See Google’s A2A overview and Microsoft’s alignment news.

- MCP/tool access: If your agent needs internal tools (e.g., product DB, email), expose them via MCP or approved connectors, and scope permissions to the pilot dataset only.

- Runlayer‑style checks: Add prompt‑injection filters and outbound (egress) allow‑list rules, drawing on recent agent security research and products.
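
Before delegating a task over A2A, it helps to check the partner's Agent Card locally. The sketch below assumes the card has already been fetched and parsed into a dict, and that it carries `name`, `url`, `version`, and a `skills` list with `id` entries; those field names reflect one reading of the public A2A Agent Card schema and should be verified against the current spec. `can_delegate` and the host allow-list are hypothetical helpers, and signature verification is deliberately not shown.

```python
import logging
from typing import Optional
from urllib.parse import urlparse

logger = logging.getLogger("a2a.pilot")

# Assumed minimum fields for the pilot; check against the current A2A spec.
REQUIRED_CARD_FIELDS = ("name", "url", "version", "skills")


def missing_fields(card: dict) -> list:
    return [name for name in REQUIRED_CARD_FIELDS if name not in card]


def find_skill(card: dict, skill_id: str) -> Optional[dict]:
    for skill in card.get("skills", []):
        if skill.get("id") == skill_id:
            return skill
    return None


def can_delegate(card: dict, skill_id: str, allowed_hosts: set) -> bool:
    """Allow a remote call only for allow-listed hosts that advertise the skill."""
    missing = missing_fields(card)
    if missing:
        logger.warning("agent card rejected: missing fields %s", missing)
        return False
    host = urlparse(card["url"]).hostname
    if host not in allowed_hosts:
        logger.warning("agent card rejected: host %s not allow-listed", host)
        return False
    if find_skill(card, skill_id) is None:
        logger.warning("agent %s does not advertise skill %s", card["name"], skill_id)
        return False
    # Log every approved remote invocation for later audit.
    logger.info("delegating skill %s to %s at %s", skill_id, card["name"], host)
    return True
```

A check like this runs before any network call to the partner agent, so a rejected card never receives pilot data.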

Days 7–10: Payments readiness (optional but recommended)

- ACP sandbox: Implement a basic ACP interface (REST or MCP server) per Stripe’s spec (private preview). Do not accept real payments.

- Create SPT: In a test agent, issue a SharedPaymentToken with tight usage limits (currency, max amount, TTL). Use Stripe’s test helpers to simulate a PaymentIntent with the SPT and observe related webhooks.

- Compliance dry‑run: Document AP/PCI touchpoints, revocation paths, and event logging. Reference Visa’s ongoing agentic commerce pilots to align expectations with finance and risk teams.
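
For the compliance dry‑run, it can help to model the limits you intend to place on a token before touching any sandbox. The following is a local sketch only: `ScopedPaymentGrant` and `authorize` are hypothetical names, not Stripe objects or API calls, and the reason codes are invented for illustration. Amounts are assumed to be integers in minor units (for example, cents).

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ScopedPaymentGrant:
    """Local stand-in for a scoped token's limits (not a Stripe type)."""
    currency: str
    max_amount: int          # minor units, e.g. cents
    expires_at: datetime     # timezone-aware expiry (the TTL)
    revoked: bool = False


def authorize(
    grant: ScopedPaymentGrant,
    amount: int,
    currency: str,
    now: Optional[datetime] = None,
) -> tuple[bool, str]:
    """Check a proposed charge against the grant; return (ok, reason)."""
    now = now or datetime.now(timezone.utc)
    if grant.revoked:
        return False, "revoked"
    if now >= grant.expires_at:
        return False, "expired"
    if currency.lower() != grant.currency.lower():
        return False, "currency_mismatch"
    if amount <= 0 or amount > grant.max_amount:
        return False, "amount_out_of_bounds"
    return True, "ok"
```

Each reason code maps to a row in the compliance document: who can revoke, what gets logged on expiry, and which event a rejected charge should raise.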

Days 11–14: Reliability, SLOs, and cutover

- Evals & SLOs: Define pilot SLOs (success rate, latency, re‑ask rate, human handoff rate) and add runbooks/alerts. Use OTel spans to attribute lapses to model errors vs. tool failures.

- Game‑day: Simulate failure modes Microsoft researchers have highlighted (marketplace/reputation manipulation, adversarial data). Verify rollback and quarantine paths.

- Stakeholder demo: Show the live registry, guardrails, A2A handoff, ACP sandbox trace, and a dashboard with before/after metrics.
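
The pilot SLOs named above (success rate, latency, re‑ask rate, human handoff rate) can be computed from a simple list of task results. This is a minimal sketch: `TaskResult`, `summarize`, `breaches`, and the target values in `TARGETS` are placeholders to be replaced with your own data model and agreed thresholds.

```python
import math
from dataclasses import dataclass


@dataclass
class TaskResult:
    succeeded: bool      # completed without human rescue
    latency_s: float     # end-to-end seconds
    reasks: int          # times the agent had to re-ask the user
    handed_off: bool     # escalated to a human


# Placeholder targets: ("min" | "max", bound). Agree real values with stakeholders.
TARGETS = {
    "success_rate": ("min", 0.90),
    "handoff_rate": ("max", 0.20),
    "p95_latency_s": ("max", 30.0),
}


def summarize(results: list) -> dict:
    n = len(results)
    if n == 0:
        raise ValueError("no task results to summarize")
    latencies = sorted(r.latency_s for r in results)
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * n) - 1)
    return {
        "success_rate": sum(r.succeeded for r in results) / n,
        "handoff_rate": sum(r.handed_off for r in results) / n,
        "reask_rate": sum(r.reasks > 0 for r in results) / n,
        "p95_latency_s": latencies[p95_index],
    }


def breaches(summary: dict, targets: dict = TARGETS) -> list:
    """Return the names of metrics that miss their target."""
    missed = []
    for metric, (kind, bound) in targets.items():
        value = summary[metric]
        if (kind == "min" and value < bound) or (kind == "max" and value > bound):
            missed.append(metric)
    return missed
```

Running `breaches(summarize(results))` on a daily batch gives a short list that can feed an alert or the stakeholder dashboard.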

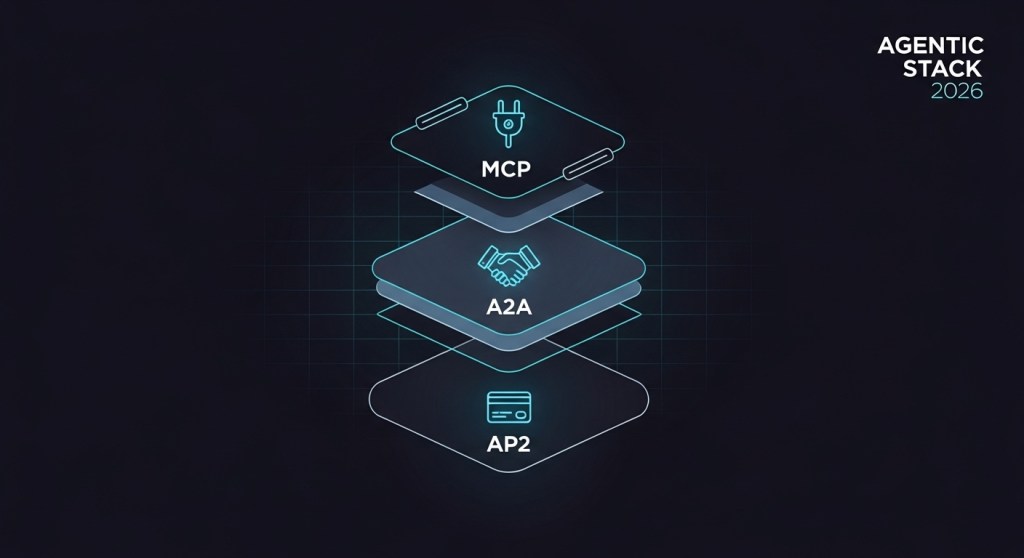

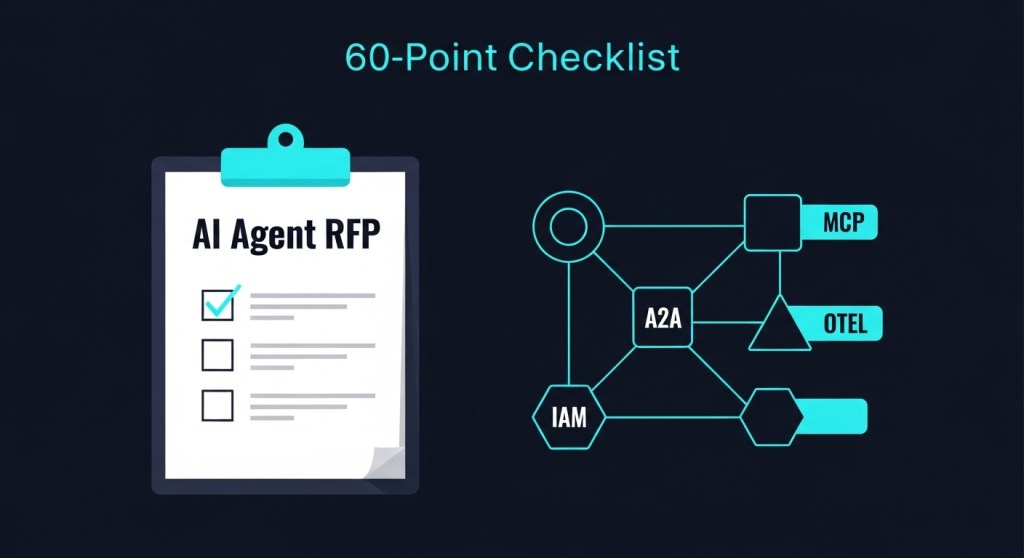

Architecture at a glance

- Control plane: Agent 365 (registry, access control, visualization, security).

- Interop: A2A for agent‑to‑agent tasks; MCP or connectors for tool access.

- Payments: ACP/SPT sandbox only.

- Observability: OpenTelemetry GenAI spans/metrics.

- Risk: Quarantine, least privilege, HIL gates, and revocation hooks.

Metrics that matter (and how to instrument)

- gen_ai.client.token.usage (cost/efficiency).

- Agent task success (% tasks completed without human rescue).

- Time‑to‑resolve (minutes) and handoff rate (%).

- Policy violations (blocked actions, data egress events).

- A2A success (cross‑agent task completion rate).

Emit OTel spans for each request/step; tag with gen_ai.provider.name, gen_ai.operation.name, gen_ai.request.model, gen_ai.tool.name, and gen_ai.data_source.id. Add business attributes (order_id, ticket_id) for ROI attribution.
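
A small helper keeps span attributes consistent across steps. The sketch below assumes the `opentelemetry-api` package for the tracer and falls back to running the step untraced if it is not installed. `genai_attributes`, `traced_step`, the `app.` prefix for business attributes, and the tracer name are hypothetical choices, not part of the OpenTelemetry conventions.

```python
from typing import Any, Callable, Optional

try:
    from opentelemetry import trace
    tracer = trace.get_tracer("pilot.agent")  # tracer name is an assumption
except ImportError:
    tracer = None  # run untraced if the OTel API is not installed


def genai_attributes(
    provider: str,
    operation: str,
    model: str,
    tool: Optional[str] = None,
    data_source_id: Optional[str] = None,
    **business: Any,
) -> dict:
    """Build span attributes using GenAI semantic-convention keys."""
    attrs = {
        "gen_ai.provider.name": provider,
        "gen_ai.operation.name": operation,
        "gen_ai.request.model": model,
    }
    if tool is not None:
        attrs["gen_ai.tool.name"] = tool
    if data_source_id is not None:
        attrs["gen_ai.data_source.id"] = data_source_id
    # Business attributes (order_id, ticket_id) under a custom prefix.
    for key, value in business.items():
        attrs["app." + key] = value
    return attrs


def traced_step(name: str, attrs: dict, step: Callable[[], Any]) -> Any:
    """Run one agent step inside a span carrying the given attributes."""
    if tracer is None:
        return step()
    with tracer.start_as_current_span(name, attributes=attrs):
        return step()
```

For example, `traced_step("chat example-model", genai_attributes("openai", "chat", "example-model", ticket_id="T-123"), call_model)` wraps a hypothetical `call_model` function; without a configured SDK and exporter the span is a no‑op, so the helper is safe to add before collectors are in place.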

Common pitfalls (and how to avoid them)

- Shadow agents: Turn on quarantine and require Entra Agent ID for discovery.

- Over‑permissioned tools: Start with read‑only; add write scopes post‑evaluation.

- Inter‑agent trust: Prefer signed Agent Cards and explicit capability discovery; log all remote invocations.

- Payment sprawl: Use SPTs with tight limits; never share raw credentials across agents.

- Reputation games: Don’t rely on star ratings alone; use telemetry, evals, and allow‑lists.

Realistic next steps

After the pilot, expand to a second workflow and bring ACP out of sandbox only after a formal risk review. Layer in cost controls and architectural patterns from our prior posts below.

Related guides on HireNinja

- Ship an AI Agent Registry + IAM in 7 Days

- Agent Reliability Engineering in 30 Days

- Agent‑Led Payments: AP2 + A2A + MCP

- Agent Attribution for 2026

- The 2026 Agentic Interop Stack

- Agent Platform RFP Checklist (2026)

Sources and further reading

- Microsoft Agent 365 announcement and docs (early access)

- A2A protocol overview and Microsoft alignment (Google Developers, TechCrunch)

- ACP/SPT docs and newsroom updates (Stripe; Salesforce collab)

- Visa intelligent commerce pilots (Reuters/AP)

- OpenTelemetry GenAI semantic conventions

- Agent security research and real‑world failure modes

Call to action: Want a done‑with‑you Agent 365 pilot? Book a 30‑minute scoping call and we’ll help you ship this 14‑day plan safely—governed, observable, and ROI‑focused.