Agent platforms are maturing fast (Microsoft’s Agent 365, OpenAI’s AgentKit, and cross‑vendor A2A interop are now real). That’s great for capability—but it also makes costs unpredictable. This playbook shows how to measure, attribute, and reduce AI agent spend in 30 days using OpenTelemetry, simple model‑routing, and budget guardrails.

Who this is for

- Startup founders and product leaders who need agent ROI by the next board meeting.

- Ops/RevOps teams who must explain “where the tokens went.”

- E‑commerce operators who want lower cost‑per‑resolution before peak season.

Outcomes you can expect

- Clear cost attribution per agent, workflow, tenant, and outcome.

- Targeted 25–40% spend reduction from caching, routing, and failure control.

- Budget guardrails and alerts that stop overruns without breaking CX.

The 3 metrics that matter

- Cost per Resolution (CPR): dollars per successful outcome (ticket solved, order updated, refund created).

CPR = (LLM/API fees + tool calls + supervision labor + infra) / number of successful outcomes

- Cost per Attempt (CPA): dollars per agent attempt, successful or not. Useful for spotting waste from retries/loops.

- Success Rate (SR): successful outcomes / total attempts. Improves when you fix failure modes, not when you just spend more.
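To make the relationship between the three metrics concrete, here is a minimal sketch. The class name, field names, and the sample figures are illustrative assumptions, not numbers from a real workload. It also shows that, when both are measured over the same attempts, CPR equals CPA divided by SR.

```python
from dataclasses import dataclass


@dataclass
class WorkflowTotals:
    """Totals for one workflow over a reporting window (illustrative fields)."""
    total_cost_usd: float  # LLM/API fees + tool calls + supervision labor + infra
    attempts: int          # every agent attempt, successful or not
    successes: int         # attempts that ended in a successful outcome


def cost_per_attempt(t: WorkflowTotals) -> float:
    # Assumes at least one attempt in the window.
    return t.total_cost_usd / t.attempts


def success_rate(t: WorkflowTotals) -> float:
    return t.successes / t.attempts


def cost_per_resolution(t: WorkflowTotals) -> float:
    # Assumes at least one success; guard for zero in production code.
    return t.total_cost_usd / t.successes


# Made-up sample: $500 spent on 1,000 attempts, 800 of them successful.
totals = WorkflowTotals(total_cost_usd=500.0, attempts=1000, successes=800)
cpa = cost_per_attempt(totals)     # 0.50 dollars per attempt
sr = success_rate(totals)          # 0.80
cpr = cost_per_resolution(totals)  # 0.625 dollars per resolution, i.e. cpa / sr
```

Because CPR = CPA / SR, raising SR lowers CPR even when CPA stays flat, which is why fixing failure modes shows up directly in cost per resolution.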

Week 1 — Instrument everything with OpenTelemetry

Adopt the Generative AI semantic conventions so every agent call emits standardized telemetry. At minimum, capture model name, input/output tokens, cache hits, tool calls, latency, and outcome labels.

- Implement OpenTelemetry gen‑ai metrics for token usage and time-per-token.

- Track cache economics using proposed attributes for cache read/write tokens (see the community discussion here).

- Emit span attributes for `customer_id`, `agent_id`, `workflow`, `intent`, `outcome` (success, escalation, retry), and `cost_usd` per call.
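The attribute set above could be assembled with a small helper like the one below. This is a sketch under assumptions: the `gen_ai.*` keys follow the OpenTelemetry Generative AI semantic conventions, while the `app.` prefix for the business attributes and the helper name `build_span_attributes` are hypothetical choices made for this example.

```python
from typing import Dict, Union

AttrValue = Union[str, int, float, bool]

# Outcome labels taken from the list above.
ALLOWED_OUTCOMES = {"success", "escalation", "retry"}


def build_span_attributes(
    model: str,
    input_tokens: int,
    output_tokens: int,
    customer_id: str,
    agent_id: str,
    workflow: str,
    intent: str,
    outcome: str,
    cost_usd: float,
) -> Dict[str, AttrValue]:
    """Return a flat attribute dict suitable for one agent-call span."""
    if outcome not in ALLOWED_OUTCOMES:
        raise ValueError(f"unknown outcome label: {outcome!r}")
    return {
        # Keys from the OpenTelemetry gen-ai semantic conventions.
        "gen_ai.request.model": model,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
        # Hypothetical application-level keys; pick your own namespace.
        "app.customer_id": customer_id,
        "app.agent_id": agent_id,
        "app.workflow": workflow,
        "app.intent": intent,
        "app.outcome": outcome,
        "app.cost_usd": cost_usd,
    }


attrs = build_span_attributes(
    model="small_model", input_tokens=1200, output_tokens=300,
    customer_id="c-42", agent_id="support-l1", workflow="refund",
    intent="refund_status", outcome="success", cost_usd=0.004,
)
# With the OpenTelemetry Python API, the dict can be attached to the active
# span via span.set_attributes(attrs).
```

Validating the outcome label at emit time keeps the downstream dashboard from fragmenting across misspelled labels.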

Need a quick primer on observability for agents? Set up tracing alongside metrics; an eBPF‑style boundary tracing approach (e.g., ideas from AgentSight) helps correlate prompts, tool calls, and system effects without invasive code changes.


Week 2 — Build a cost ledger and CPR dashboard

Create a simple cost map so each token, request, and tool call translates to dollars in your data warehouse.

- Cost dictionary: table of `model`, `price_input_per_1k`, `price_output_per_1k`, `cache_write_factor`, `cache_read_factor`, `tool_fixed_cost`. Update weekly.

- Attribution join: join OTel spans to the dictionary to compute `cost_usd` per span; aggregate by `agent_id`, `workflow`, `customer_id`, `outcome`.

- Dashboard: CPR, CPA, SR by workflow; top 10 costly prompts; retry distribution; cache hit rate; tool‑call outliers.
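The attribution join described above might look like the following in-memory sketch. The prices are made-up round numbers, the span field names (`input_tokens`, `cache_read_tokens`, `tool_calls`, and so on) are assumptions, and the formula assumes that `input_tokens` excludes cached tokens and that the cache factors scale the input price. Check your vendor's billing rules before relying on it.

```python
from collections import defaultdict

# Made-up round prices for illustration only; real rates come from your vendor.
COST_DICTIONARY = {
    "small_model": {
        "price_input_per_1k": 1.00,
        "price_output_per_1k": 2.00,
        "cache_write_factor": 1.25,
        "cache_read_factor": 0.10,
        "tool_fixed_cost": 0.01,
    },
}


def span_cost_usd(span, prices=COST_DICTIONARY):
    """Price one span by joining it to the cost dictionary on model name."""
    p = prices[span["model"]]
    in_rate = p["price_input_per_1k"] / 1000.0
    out_rate = p["price_output_per_1k"] / 1000.0
    return (
        span["input_tokens"] * in_rate
        + span.get("cache_write_tokens", 0) * in_rate * p["cache_write_factor"]
        + span.get("cache_read_tokens", 0) * in_rate * p["cache_read_factor"]
        + span["output_tokens"] * out_rate
        + span.get("tool_calls", 0) * p["tool_fixed_cost"]
    )


def cost_by(spans, keys=("workflow", "outcome"), prices=COST_DICTIONARY):
    """Sum span costs grouped by the given attribute keys."""
    totals = defaultdict(float)
    for span in spans:
        totals[tuple(span[k] for k in keys)] += span_cost_usd(span, prices)
    return dict(totals)


spans = [
    {"model": "small_model", "workflow": "refund", "outcome": "success",
     "input_tokens": 1000, "output_tokens": 500,
     "cache_read_tokens": 2000, "tool_calls": 2},
    {"model": "small_model", "workflow": "refund", "outcome": "retry",
     "input_tokens": 1000, "output_tokens": 0},
]
ledger = cost_by(spans)  # keyed by (workflow, outcome)
```

In a warehouse the same logic becomes a join between the span table and the dictionary table; keeping the dictionary as data rather than code makes the weekly price update a single-row change.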

    -- Example CPR query (pseudo-SQL, PostgreSQL-style date arithmetic)
    SELECT workflow,
           SUM(cost_usd) / NULLIF(SUM(CASE WHEN outcome = 'success' THEN 1 ELSE 0 END), 0) AS cpr,
           AVG(cache_hit_rate) AS cache_hit,
           AVG(retries) AS avg_retries
    FROM agent_spans_hourly
    WHERE ts >= now() - interval '30 days'
    GROUP BY 1
    ORDER BY cpr DESC;

Tip: Track a “frontier cost‑of‑pass” baseline using ideas from the research community to compare accuracy‑vs‑cost across models and strategies; see the economic framing in Cost‑of‑Pass.

Week 3 — Cut waste before you optimize

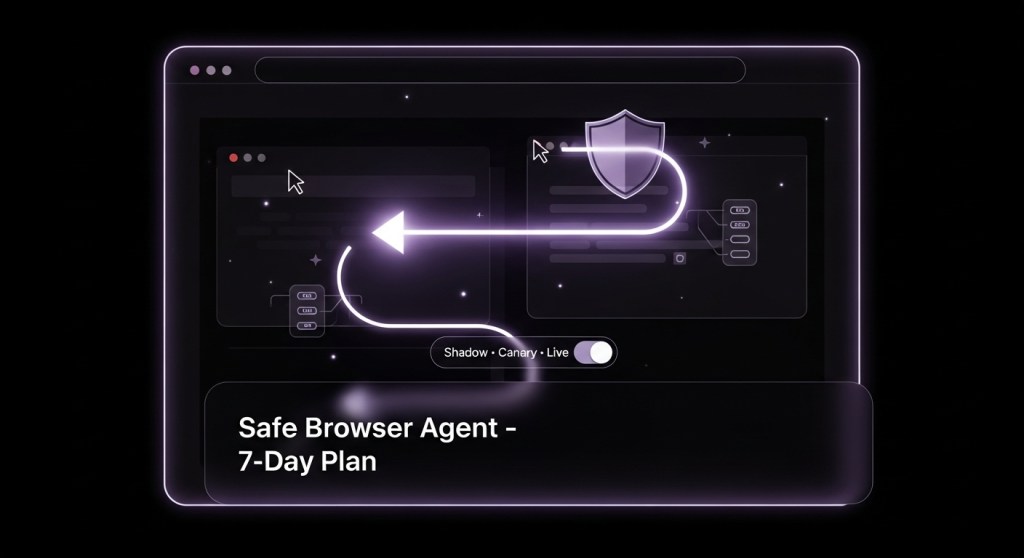

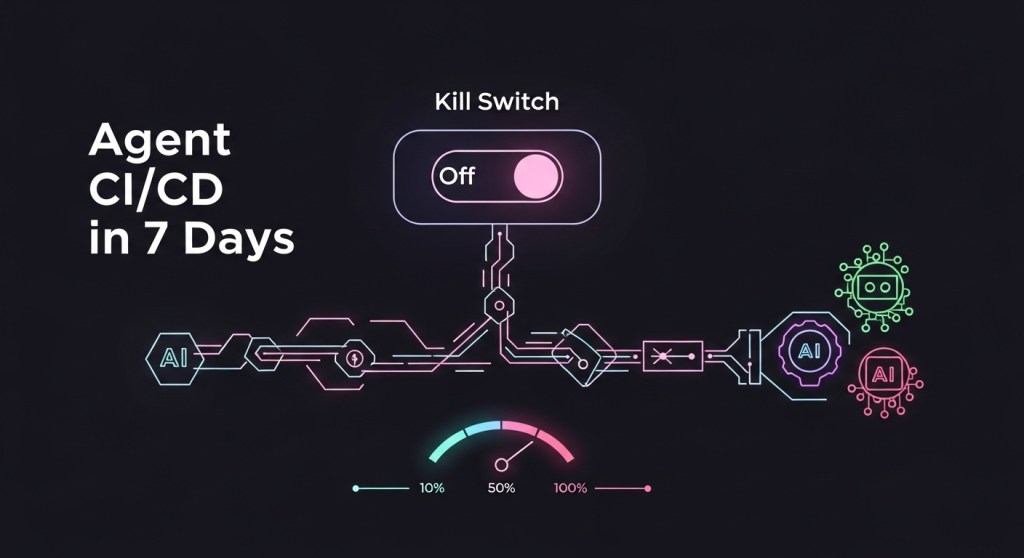

- Kill failure loops: add timeouts and retry caps. If SR < 85% for a workflow, gate deploys via canaries (see our CI/CD guide).

- Cache aggressively where quality holds: enable prompt prefix caching for long system prompts, embeddings caches for repeated lookups, and deterministic tool schemas to maximize hits.

- Trim prompts: shorten instructions, compress memories, and pin retrieval windows. A 10% reduction in prompt tokens lowers input‑token cost by about 10% on every request, so the savings scale with traffic.

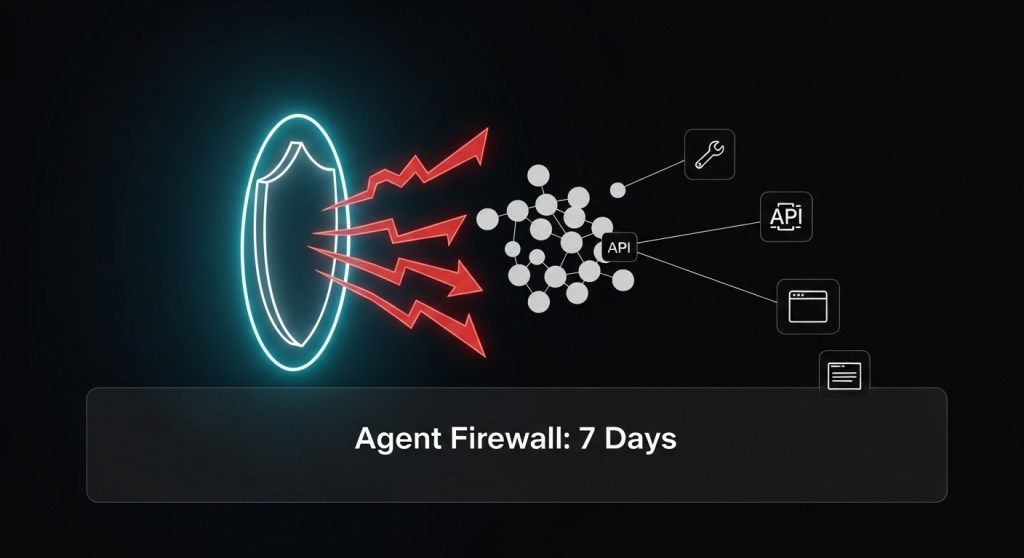

- Guard tools: block cost‑explosive tool calls with an Agent Firewall and OPA policies (max items per call, max pages fetched, etc.).
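As one way to picture the retry cap and timeout from the list above, here is a minimal sketch. The function name `run_with_limits` and its defaults are hypothetical. It checks the deadline between attempts only, so it does not interrupt an attempt that is already running; per-call timeouts still need to be set on the model or tool client.

```python
import time
from typing import Callable, Optional, Tuple


def run_with_limits(
    attempt_fn: Callable[[], Optional[str]],
    max_attempts: int = 3,
    deadline_s: float = 30.0,
    clock: Callable[[], float] = time.monotonic,
) -> Tuple[Optional[str], int]:
    """Call attempt_fn until it returns a result, the cap is hit, or time runs out.

    attempt_fn returns None to signal a failed attempt.
    Returns (result or None, number of attempts made).
    """
    start = clock()
    attempts = 0
    while attempts < max_attempts and clock() - start < deadline_s:
        attempts += 1
        result = attempt_fn()
        if result is not None:
            return result, attempts
    return None, attempts  # caller escalates to a human or a fallback route


# Usage: a stand-in agent call that fails once, then succeeds.
calls = {"n": 0}


def flaky_agent_call() -> Optional[str]:
    calls["n"] += 1
    return "resolved" if calls["n"] >= 2 else None


result, used = run_with_limits(flaky_agent_call, max_attempts=3)
```

Returning the attempt count alongside the result makes it easy to emit a `retries` value on the span, which feeds the retry distribution on the dashboard.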

Week 4 — Route smartly and enforce budgets

- Tiered model routing: use a small/fast model for easy cases, and escalate to larger models only when confidence is low or impact is high. Recent work on cost‑aware orchestration reports savings of 20–30% without hurting reliability.

- Outcome‑aware retries: if a retry is cheaper than human escalation and keeps CPR below target, retry once with a different strategy (e.g., higher temperature + stricter tool plan).

- Budgets and alerts: define daily spend caps per `agent_id`/tenant. When approaching thresholds, auto‑switch to low‑cost routes or require human approval.

    # Pseudo-policy for routing (YAML)
    route:
      - if: confidence >= 0.85 and risk == 'low'
        use: small_model
      - if: confidence < 0.85 and impact == 'high'
        use: large_reasoning_model
      - else:
        use: base_model
    budgets:
      default_daily_usd: 300
      actions_on_80pct: ['switch_to_small_model', 'require_approval']
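The routing rules and budget threshold in the policy above can be sketched in a few lines of Python. The function names are hypothetical, and how `confidence`, `risk`, and `impact` are produced is left to your own classifier or heuristics.

```python
from typing import List


def choose_model(confidence: float, risk: str, impact: str) -> str:
    """Mirror the three routing rules from the pseudo-policy, in order."""
    if confidence >= 0.85 and risk == "low":
        return "small_model"
    if confidence < 0.85 and impact == "high":
        return "large_reasoning_model"
    return "base_model"  # everything that matches neither rule


def budget_actions(
    spent_today_usd: float,
    daily_cap_usd: float = 300.0,
    threshold: float = 0.80,
) -> List[str]:
    """Return the guardrail actions to apply once spend reaches the threshold."""
    if spent_today_usd / daily_cap_usd >= threshold:
        return ["switch_to_small_model", "require_approval"]
    return []


easy = choose_model(confidence=0.92, risk="low", impact="low")
hard = choose_model(confidence=0.40, risk="high", impact="high")
other = choose_model(confidence=0.92, risk="high", impact="low")
near_cap = budget_actions(spent_today_usd=250.0)
under_cap = budget_actions(spent_today_usd=100.0)
```

Keeping the routing decision in a pure function like this makes it straightforward to unit-test rule changes before they affect live traffic, and keeps the logic vendor-neutral.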

Example: Support L1 deflection CPR drops from $3.10 → $1.85

What changed:

- Prompt trimmed 28%; cache hit rate from 0% → 42% for long instructions.

- Added small→large routing with one guarded retry.

- Blocked costly web‑browse side quests with the safe browser agent patterns.

Result (30 days): CPA −37%, SR +9 pts, CPR −40%, while CSAT held steady.

Policy and governance pointers (don’t skip)

Budget guardrails should sit inside your broader governance program. Use the NIST AI RMF’s Manage/Measure functions for risk controls and link to EU AI Act obligations if you operate in the EU.

- NIST AI RMF and Playbook for operational controls (framework, playbook).

- EU AI Act timeline: GPAI obligations start before full enforcement; plan ahead (Reuters summary).

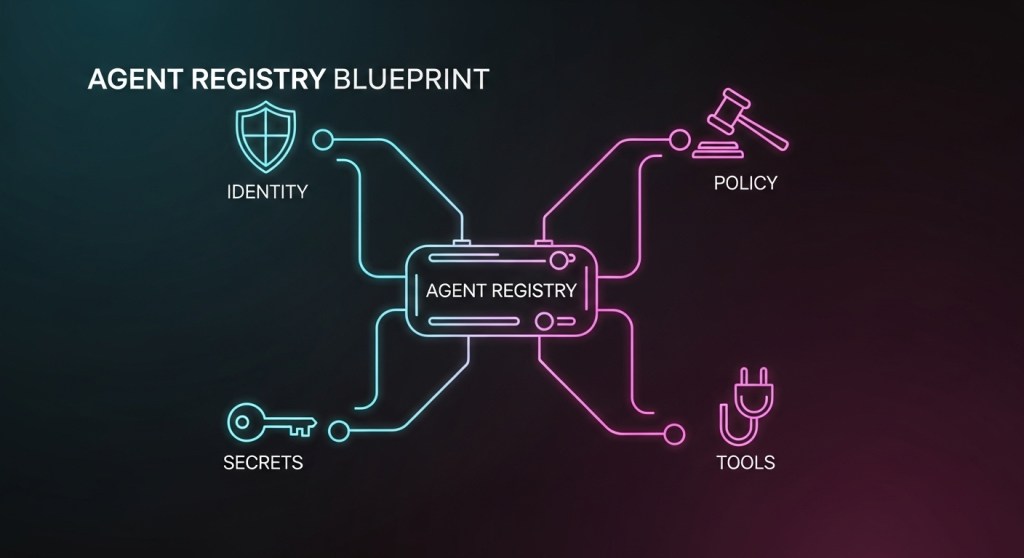

- For a registry/control‑plane approach, see our Agent Control Plane and Agent Registry posts.

RFP context: Where vendors fit

As you formalize budgets, expect platform features to help: Microsoft’s Agent 365 emphasizes registries and access controls; OpenAI’s AgentKit focuses on building and evaluating agents; and A2A seeks cross‑platform interop. Use these, but keep your cost ledger and routing logic vendor‑neutral.

Your 30‑day checklist

- Day 1–3: Wire up OTel gen‑ai metrics; start emitting token counts, cache hits, model names, outcomes.

- Day 4–7: Build the cost dictionary and attribution jobs; launch CPR/CPA/SR dashboard.

- Day 8–14: Kill loops, cap retries, trim prompts, turn on caching; deploy an agent firewall.

- Day 15–21: Add small→large model routing with a single guarded retry and human escalation thresholds.

- Day 22–30: Set daily budgets and alerts; review the worst 10 workflows; refactor for CPR targets; update RFP asks.

FAQ

Will this hurt quality? Not if you fix failure modes first and escalate thoughtfully. Keep an eye on SR and CSAT, not just cost curves.

What about compliance? Tie budget guardrails and audit logs into your governance baseline. Start with our 48‑hour governance checklist.

Bottom line

Agent capabilities are exploding across vendors. The teams that win won’t just build faster—they’ll manage cost per outcome. Instrument with OpenTelemetry, build a cost ledger, eliminate waste, route smartly, and enforce budgets. Do this for 30 days and you’ll have durable, compounding savings—and a story your CFO will love.

Call to action: Want the starter dashboards, YAML policies, and SQL templates mentioned here? Subscribe to HireNinja and reply “FinOps kit”—we’ll send the templates and a 30‑minute walkthrough.