What you’ll get: a fast, founder-friendly playbook to publish your AI agent where buyers actually look in 2025—MCP registries, enterprise marketplaces, NLWeb endpoints—and a 21-day plan to launch with guardrails, attribution, and ROI.

Why distribution is the new moat for AI agents

In 2025, agent platforms went from experiments to ecosystems. OpenAI shipped AgentKit with an enterprise Connector Registry, Microsoft added MCP support in Windows, and cloud providers and app suites rolled out dedicated agent stores. If your agent isn't listed where admins shop, or discoverable via NLWeb/MCP, you're invisible at the exact moment budgets are shifting to automation. Together, OpenAI AgentKit and its Connector Registry, Anthropic's MCP (now supported in Windows), and Microsoft's NLWeb define the new distribution surface.

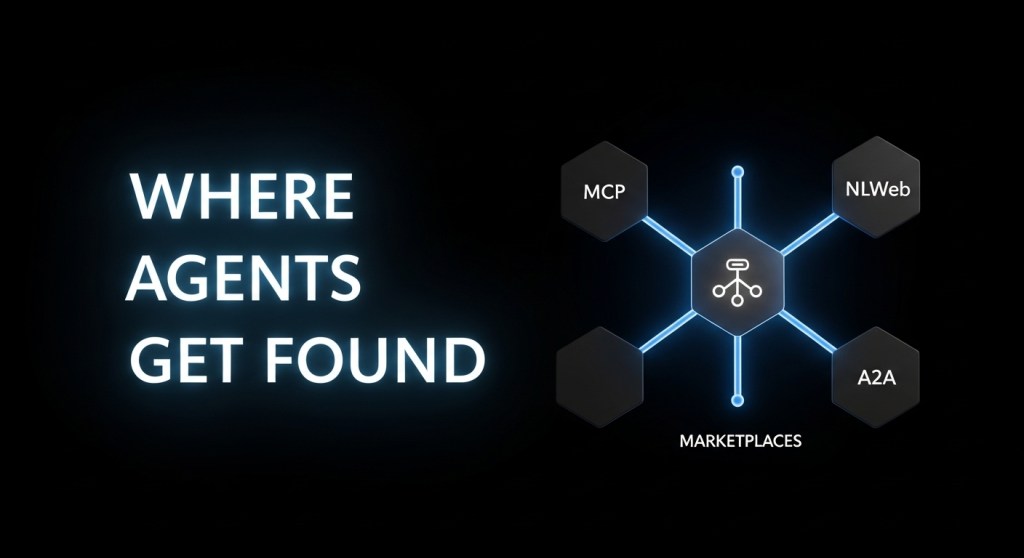

The 2025 agent distribution map (what matters and why)

- MCP registries and OS surfaces – Microsoft is bringing the Model Context Protocol (MCP) to Windows with a gated registry and consent prompts, positioning MCP as the “USB‑C of AI apps.” Listing an MCP server makes your agent discoverable across tools that speak MCP. The Verge, Anthropic.

- Your website as an agent endpoint (NLWeb) – NLWeb turns your site into a conversational API and—crucially—every NLWeb instance is also an MCP server. That means one implementation helps both humans and agents find your catalog or docs. Microsoft, NLWeb, GitHub.

- Enterprise marketplaces – Buyers want vetted listings they can procure on existing POs:

- App‑suite ecosystems – Salesforce’s Agentforce 360 and Slack integration surface agents inside daily workflows; Notion’s agent automates across pages and databases. Salesforce/Slack, Notion.

- Browser/assistant agents – Google's Mariner and Amazon's Nova Act bring agent actions to the browser. Optimizing for these means clean markup, stable flows, and safe purchase protocols such as AP2. Mariner, Nova Act.
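One way to act on "clean markup" is a quick lint pass over your templates. The sketch below uses Python's standard `html.parser` to flag two patterns that commonly confuse automated browsing: clickable non-interactive elements (a `div` or `span` with `onclick` instead of a `button` or link) and form fields with no accessible name. The heuristics, the `NO_LABEL_NEEDED` set, and the `audit_markup` name are our own assumptions, not rules published by Mariner or Nova Act.

```python
from html.parser import HTMLParser

# Input types that do not need a visible label (heuristic assumption).
NO_LABEL_NEEDED = {"hidden", "submit", "button", "reset", "image"}


class AgentMarkupAudit(HTMLParser):
    """Collects markup patterns that tend to trip up browser agents (heuristic)."""

    def __init__(self) -> None:
        super().__init__()
        self.issues: list[str] = []
        self.label_for: set[str] = set()                 # ids referenced by <label for="...">
        self.fields: list[tuple[str, dict, bool]] = []   # (tag, attrs, wrapped in <label>)
        self.label_depth = 0

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if "onclick" in a and tag not in ("button", "a"):
            self.issues.append(f"<{tag}> uses onclick; prefer <button> or <a>")
        if tag == "label":
            self.label_depth += 1
            if a.get("for"):
                self.label_for.add(a["for"])
        if tag in ("input", "select", "textarea"):
            self.fields.append((tag, a, self.label_depth > 0))

    def handle_endtag(self, tag):
        if tag == "label" and self.label_depth:
            self.label_depth -= 1


def audit_markup(html: str) -> list[str]:
    """Return human-readable issues found in an HTML fragment."""
    parser = AgentMarkupAudit()
    parser.feed(html)
    parser.close()
    issues = list(parser.issues)
    for tag, a, wrapped in parser.fields:
        if (a.get("type") or "text").lower() in NO_LABEL_NEEDED:
            continue
        # A field is named if wrapped in <label>, targeted by <label for>, or has aria-label.
        if not (wrapped or a.get("aria-label") or a.get("id") in parser.label_for):
            issues.append(f"<{tag}> has no label or aria-label")
    return issues
```

Running it over a page fragment returns a list of findings; an empty list means neither heuristic fired, not that the page is agent-proof.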

Before you list: hard truths on readiness

- Security & maintainability – Early research flags novel vulnerabilities in community MCP servers; Windows’ MCP rollout reflects tighter gating for safety. Bake in permissions, logging, and rate limits up front. arXiv, The Verge.

- Value clarity – Gartner expects over 40% of agentic AI projects to be canceled by the end of 2027 due to escalating costs and unclear business value. Your listing must articulate the job-to-be-done and prove outcomes within weeks. Reuters/Gartner.

- Safe purchases – If your agent touches checkout, support agent-driven purchases via AP2 (the Agent Payments Protocol) for traceability and dispute handling. TechCrunch on AP2.
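For the rate limits mentioned above, a per-caller token bucket is a common, simple choice: it allows short bursts while capping the sustained call rate. A minimal sketch, assuming one bucket per calling agent or API key; the capacity and refill numbers are placeholders, the `check_rate_limit` helper is hypothetical, and the clock is injectable so behavior can be tested deterministically.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TokenBucket:
    """Allows bursts up to `capacity` calls, refilling at `rate` tokens per second."""
    capacity: float
    rate: float
    clock: Callable[[], float] = time.monotonic
    tokens: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity   # start full
        self.last = self.clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# One bucket per caller (e.g. agent identity or API key); hypothetical keying scheme.
buckets: dict[str, TokenBucket] = {}


def check_rate_limit(caller_id: str, capacity: float = 10, rate: float = 1.0) -> bool:
    bucket = buckets.get(caller_id)
    if bucket is None:
        bucket = buckets[caller_id] = TokenBucket(capacity, rate)
    return bucket.allow()
```

In a tool server you would call `check_rate_limit(caller_id)` before dispatching each tool call and return an error when it is `False`; the in-memory dict only works for a single process, so a shared store would be needed across replicas.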

How to make your agent discoverable (and buyable)

- Ship an MCP server that exposes stable tools and telemetry. If you run on Windows, prepare for registry policies and consent prompts. Anthropic MCP, Windows MCP.

- Add NLWeb to your site so humans and agents can query your catalog in natural language. NLWeb instances double as MCP servers; you get discoverability for free. Microsoft, NLWeb.

- Publish in enterprise marketplaces where your buyers already procure:

- Integrate with platform registries such as OpenAI’s AgentKit Connector Registry to govern data access across ChatGPT and API orgs. OpenAI, TechCrunch.

- Optimize for browser agents (Mariner, Nova Act): use clean semantic HTML, avoid fragile flows, and implement AP2 for checkout. Mariner, Nova Act, AP2.
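To make "stable tools and telemetry" concrete, here is a dependency-free sketch of the two MCP JSON-RPC methods a tool server answers, `tools/list` and `tools/call`, with a per-tool scope check and a timing record per call. The message shapes follow the MCP specification's tool format (`name`, `description`, `inputSchema`; results as `content` items with `isError`), but transport, initialization, and capability negotiation are omitted. In production you would more likely use an official MCP SDK and export spans through OpenTelemetry; the `order_lookup` tool, the `orders:read` scope, and the in-memory `TRACES` list are hypothetical stand-ins.

```python
import time

# Hypothetical tool registry: each tool declares the scope a caller must hold.
TOOLS = {
    "order_lookup": {
        "description": "Look up the status of an order by ID.",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
        "scope": "orders:read",
        "handler": lambda args: f"Order {args['order_id']}: shipped",  # stub data
    },
}

TRACES: list[dict] = []  # stand-in for OpenTelemetry spans


def handle(request: dict, granted_scopes: set[str]) -> dict:
    """Answer one JSON-RPC request for the MCP tools/list or tools/call method."""
    rid, method = request.get("id"), request.get("method")
    params = request.get("params", {})
    if method == "tools/list":
        tools = [
            {"name": n, "description": t["description"], "inputSchema": t["inputSchema"]}
            for n, t in TOOLS.items()
            if t["scope"] in granted_scopes  # only advertise what the caller may use
        ]
        return {"jsonrpc": "2.0", "id": rid, "result": {"tools": tools}}
    if method == "tools/call":
        name = params.get("name")
        tool = TOOLS.get(name)
        if tool is None or tool["scope"] not in granted_scopes:
            return {"jsonrpc": "2.0", "id": rid,
                    "error": {"code": -32602, "message": f"Unknown or unauthorized tool: {name}"}}
        start = time.perf_counter()
        try:
            text, is_error = tool["handler"](params.get("arguments", {})), False
        except Exception as exc:  # report tool failures in-band as an MCP tool result
            text, is_error = str(exc), True
        TRACES.append({"tool": name, "ms": (time.perf_counter() - start) * 1000, "error": is_error})
        return {"jsonrpc": "2.0", "id": rid,
                "result": {"content": [{"type": "text", "text": text}], "isError": is_error}}
    return {"jsonrpc": "2.0", "id": rid, "error": {"code": -32601, "message": "Method not found"}}
```

Filtering `tools/list` by scope means a caller never discovers tools it cannot use, and the per-call trace gives you the success-rate and latency data the reliability KPIs depend on.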

A 21‑day go‑to‑market plan (templates + internal resources)

Assumes you already have an agent that delivers a clear job-to-be-done (returns automation, lead capture, order status, etc.).

- Days 1–3: Prep

- Define 1–2 golden workflows and SLOs (e.g., “90% of return requests auto‑approved in < 30s”). Set evals and error budgets. See: AgentOps in 2025.

- Instrument agent attribution: route mandates and webhook events into your CRM/BI so agent-driven revenue is traceable. See: Agent Attribution Playbook.

- Days 4–7: Ship the surfaces

- Publish an MCP server with scoped tools and OpenTelemetry traces.

- Add NLWeb to your website; expose product or docs search in natural language.

- Harden identity and add anti-spoofing controls (mandates, signed calls). See: Stop Agent Spoofing.

- Days 8–14: List where buyers are

- Days 15–21: Prove value

- Run a closed beta with 5–10 design partners. Publish SLOs and an incident playbook. AgentOps.

- Enable agent checkout using AP2 and recordable mandates. Agent‑Ready Store, AP2.

- Ship an agent SEO update: schema, NLWeb, MCP discovery. AEO 2025.
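The Days 1–3 SLO ("90% of return requests auto-approved in < 30s") can be checked mechanically. A minimal sketch, assuming you log one record per return request with whether it was auto-approved and how long it took; with a 90% target, the error budget is the 10% of requests allowed to miss, and the function reports how much of that budget a window has consumed. The `Request` fields and `slo_report` name are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Request:
    auto_approved: bool
    latency_s: float


def slo_report(requests: list[Request], target: float = 0.90, max_latency_s: float = 30.0) -> dict:
    """Share of requests meeting the SLO and fraction of the error budget used."""
    total = len(requests)
    if total == 0:
        raise ValueError("no requests in window")
    good = sum(1 for r in requests if r.auto_approved and r.latency_s < max_latency_s)
    bad = total - good
    allowed_bad = (1 - target) * total  # error budget, measured in requests
    if allowed_bad == 0:
        budget_used = 0.0 if bad == 0 else float("inf")
    else:
        budget_used = bad / allowed_bad
    return {"good_ratio": good / total, "budget_used": budget_used, "slo_met": good / total >= target}
```

A `budget_used` above 1.0 means the window has spent more than its error budget, which is a reasonable signal to pause new launches until reliability recovers.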

Positioning your listing for admins

- Lead with the workflow: “Automates order-status and returns across Zendesk + Shopify in <30 seconds.”

- Proof in numbers: pre‑commit to two ROI KPIs (AHT reduction, CSAT lift, % automated) and a time‑to‑value under 21 days.

- Security page: permissions model, data residency, audit trails, jailbreak defenses. Link your incident response policy.

- Procurement ready: pricing tiers (pilot, production), legal artifacts, SOC2/ISO status, and AP2 for any purchase flow.
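Pre-committed ROI KPIs are easier to hold yourself to when the pass/fail rule is written down as code. A sketch, assuming you have baseline and pilot averages for average handle time (AHT) and counts of automated versus total tickets; the 20% and 30% thresholds and the `roi_check` name are illustrative placeholders, not benchmarks.

```python
def roi_check(baseline_aht_s: float, pilot_aht_s: float, automated: int, total: int,
              days_to_value: int, aht_target: float = 0.20,
              automation_target: float = 0.30, max_days: int = 21) -> dict:
    """Compare pilot results with pre-committed targets (targets are placeholders)."""
    aht_reduction = (baseline_aht_s - pilot_aht_s) / baseline_aht_s
    automation_rate = automated / total if total else 0.0
    return {
        "aht_reduction": aht_reduction,
        "automation_rate": automation_rate,
        # Time-to-value must be strictly under the committed window.
        "passed": (aht_reduction >= aht_target
                   and automation_rate >= automation_target
                   and days_to_value < max_days),
    }
```

For example, a pilot that cuts AHT from 600 s to 450 s (a 25% reduction) and automates 400 of 1,000 tickets within 14 days passes these placeholder targets.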

Example: a DTC e‑commerce brand

- Add NLWeb to expose conversational product search.

- Publish an MCP server for order lookup, returns, and warranty.

- List a managed deployment in AWS Marketplace and an A2A Agent Card on Google Cloud.

- Connect the OpenAI Connector Registry for governed access across ChatGPT and your app.

- Optimize your storefront for Mariner/Nova Act with semantic HTML and AP2 for agent checkout.

- Track mandates and revenue in your CRM using our attribution playbook and AgentOps SLOs.
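For the storefront step, schema.org structured data is one widely used way to publish clean, machine-readable product facts alongside semantic HTML. A minimal sketch that emits a JSON-LD `Product` with an `Offer`; the product values are placeholders, the `product_jsonld` helper is hypothetical, and whether a particular browser agent reads JSON-LD is an assumption this playbook does not establish.

```python
import json


def product_jsonld(name: str, sku: str, price: str, currency: str, in_stock: bool, url: str) -> str:
    """Return a <script type="application/ld+json"> tag describing one product (schema.org)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": price,             # string form avoids float formatting surprises
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
            "availability": ("https://schema.org/InStock" if in_stock
                             else "https://schema.org/OutOfStock"),
        },
    }
    # Escape "</" so product text cannot close the script tag early.
    body = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{body}</script>'
```

The same facts should also appear in the visible HTML, so agents that read the DOM and agents that read structured data see consistent prices and availability.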

KPIs to watch

- Discovery: registry impressions, marketplace page views, add‑to‑workspace/install rate.

- Activation: first successful tool call, time to first closed loop (mandate → action → confirmation).

- Reliability: success rate, median time to resolve, incident frequency (with public postmortems).

- Revenue: mandates to paid conversions, AP2 purchases, LTV/CAC by channel.
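Most of these KPIs are ratios over event counts, so a small, explicit calculation keeps definitions consistent across channels. A sketch, assuming per-channel counts for impressions, installs, activations (first successful tool call), tool-call outcomes, resolution times, and paid conversions, plus acquisition spend and an LTV estimate; the `ChannelStats` fields and the example numbers are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class ChannelStats:
    impressions: int              # registry impressions or marketplace page views
    installs: int                 # add-to-workspace / install events
    activated: int                # installs with a first successful tool call
    tool_calls: int
    tool_successes: int
    resolve_minutes: list[float]  # time to resolve, per request
    paid: int                     # mandates converted to paid customers
    spend: float                  # acquisition spend for this channel
    ltv: float                    # estimated lifetime value per paid customer


def kpis(s: ChannelStats) -> dict:
    """Discovery, activation, reliability, and revenue ratios for one channel."""
    return {
        "install_rate": s.installs / s.impressions if s.impressions else 0.0,
        "activation_rate": s.activated / s.installs if s.installs else 0.0,
        "success_rate": s.tool_successes / s.tool_calls if s.tool_calls else 0.0,
        "median_resolve_min": median(s.resolve_minutes) if s.resolve_minutes else None,
        # LTV/CAC = ltv / (spend / paid), rearranged to avoid dividing by a zero CAC.
        "ltv_to_cac": s.ltv * s.paid / s.spend if s.spend else None,
    }
```

With 5,000 impressions, 250 installs, 150 activations, 1,900 of 2,000 successful calls, resolution times of 3, 5, 8, and 12 minutes, and 20 paid customers from $4,000 of spend at an LTV of $1,200, this gives a 5% install rate, 60% activation, 95% success, a 6.5-minute median, and an LTV/CAC of 6.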

Common pitfalls (and how to avoid them)

- “Agent washing” – Don’t list a chatbot as an agent. Show real tool use and guardrails; Gartner sees many projects failing for lack of value clarity. Reuters/Gartner.

- Weak identity and auditing – Use signed mandates, scoped tokens, and OpenTelemetry traces. See our anti‑spoofing playbook.

- Fragile browser flows – Mariner and Nova Act punish brittle DOM assumptions; prefer stable selectors and semantic HTML. Mariner, Nova Act.
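For "signed calls" and anti-spoofing, one common pattern is an HMAC over the request body plus a timestamp, rejecting any call whose signature does not match or whose timestamp falls outside a short window. A sketch using only Python's standard library; the `timestamp.body` message layout, the 300-second window, and the shared-secret setup are assumptions, and this is not the AP2 mandate format.

```python
import hashlib
import hmac
import time
from typing import Optional


def sign(secret: bytes, body: bytes, timestamp: int) -> str:
    """Hex HMAC-SHA256 over "timestamp.body", binding the timestamp to the payload."""
    message = str(timestamp).encode() + b"." + body
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify(secret: bytes, body: bytes, timestamp: int, signature: str,
           max_skew_s: int = 300, now: Optional[float] = None) -> bool:
    """Reject stale or future-dated calls, then compare signatures in constant time."""
    now = time.time() if now is None else now
    if abs(now - timestamp) > max_skew_s:
        return False
    expected = sign(secret, body, timestamp)
    return hmac.compare_digest(expected, signature)
```

The timestamp window limits how long a captured request stays usable; fully blocking replays inside that window would also require remembering recently seen request IDs or nonces.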

Wrap‑up

Distribution for agents is finally getting boring—in a good way. Publish an MCP server, add NLWeb, and meet buyers in their marketplaces and suites. Tie everything back to SLOs, attribution, and AP2 so your CFO sees mandated revenue, not magic.

CTA: Want help shipping this in 21 days? Subscribe for more playbooks or use our Zendesk agent quick‑start—then talk to HireNinja about an agent GTM sprint.