Browser AI Is the New Homepage: Firefox’s AI Toggle + Gemini 3 Flash Default — What Founders Must Ship This Week

Published: December 18, 2025

Executive checklist (do this first)

- Turn your most important pages into citation‑friendly, AI‑quotable sources (clear headings, schema, evidence).

- Instrument analytics to capture AI‑surface referrals (Gemini, AI Mode, AI browsers) and track conversions.

- Ship a browser & extension policy before enabling new AI features in Chrome/Firefox/Arc/Perplexity.

- Create an agent‑readable docs hub (APIs/feeds) so assistants can quote you accurately.

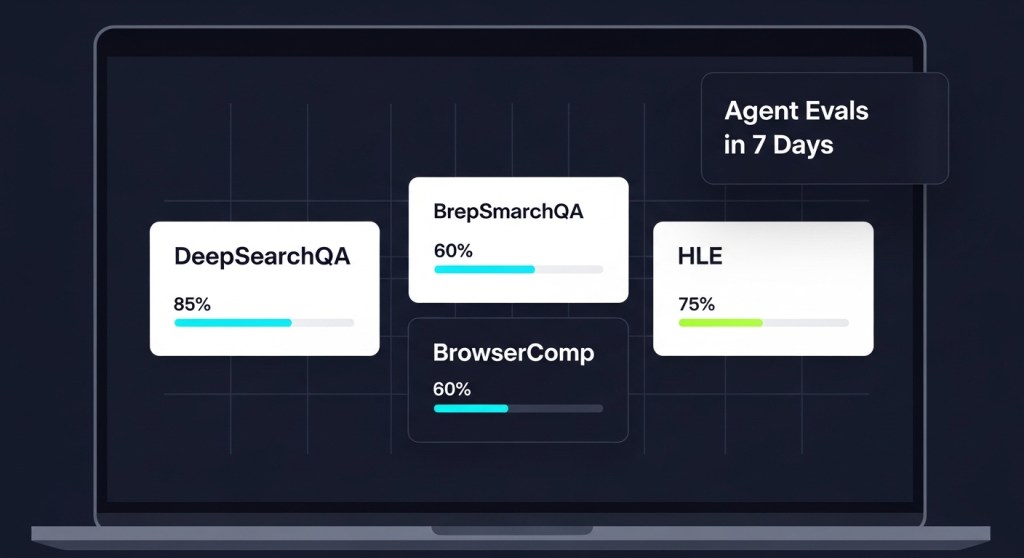

- Follow the 7‑day rollout below; assign owners today.

What just changed (and why it matters)

On December 17, 2025, Mozilla’s new CEO said AI is coming to Firefox—but as an opt‑in. The same day, Google made Gemini 3 Flash the default model in the Gemini app. Translation: the browser—your user’s first stop on the internet—is becoming an AI surface by default, with AI summaries, actions, and agentic flows sitting between you and your customer.

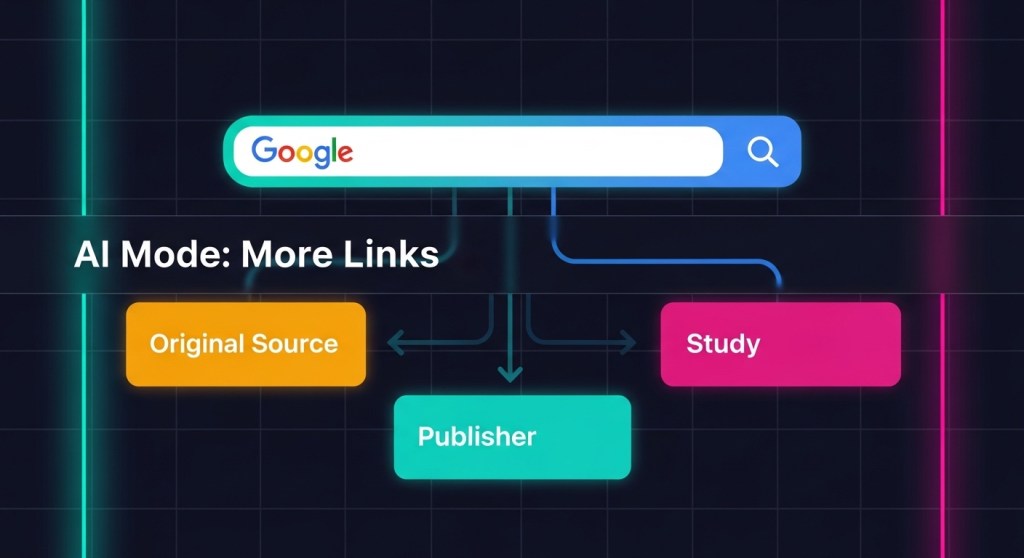

We’ve already covered how Google’s AI Mode plans to link out more. Combine that with Firefox’s opt‑in AI and the rapid rise of AI‑first browsers (Arc, Perplexity, and others), and you get a simple directive for founders and growth teams: optimize for the browser’s AI layer or risk losing visibility and conversions in 2026.

How browser AI changes growth, SEO, and CX

- Distribution shifts to answers, not blue links. AI summaries will cite and deep‑link when your page is structured, source‑backed, and fast. If you’re vague or slow, you won’t be quoted.

- Intent collapses inside the browser. Queries like “compare the best headless CMS for a B2B SaaS” yield an AI comparison table. Be the page that populates that table.

- Agentic actions will follow. Think, “book a demo,” “start a return,” or “generate a spec doc” without leaving the tab. You’ll need agent‑readable docs, safe actions, and attribution baked in.

- Security moves to the edge. The browser is now an AI runtime. Rogue extensions and risky AI sidecars can exfiltrate prompts and customer data. Lock it down first. See our org‑wide plan: 7‑Day Browser & Prompt Security.

A 7‑Day Browser‑AI Rollout (copy/paste)

Day 1 — Make 10 pages AI‑quotable

- Pick your top 10 revenue or signup pages. Add a two‑sentence TL;DR, a bulletproof “What we checked” box (dates, sources), and clear, scannable headings.

- Add or validate schema.org markup (Article, FAQPage, HowTo, Product, Review) where relevant. Cite primary sources with inline links, and include small data tables that AI can lift.

- Cross‑link to pillars. Example: from your pricing page, link to comparisons and implementation guides.

- If you’re new to this, start with our AI Mode SEO plan.
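As a rough illustration of the schema step above, the sketch below builds a schema.org FAQPage object and wraps it in a JSON-LD script tag. The helper names (faq_jsonld, to_script_tag) and the sample question are hypothetical; adapt the fields to whatever your CMS already emits.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Wrap JSON-LD in the script tag that is embedded in the page HTML."""
    # Escape "</" so answer text cannot close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + body + "</script>"


# Hypothetical content, for illustration only.
faq = faq_jsonld([("What is the return window?", "30 days from delivery.")])
print(to_script_tag(faq))
```

Generating the markup from the same data that renders the visible FAQ keeps the two from drifting apart, which matters if an assistant quotes one and a visitor reads the other.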

Day 2 — Ship an agent‑readable docs hub

- Create /ai or /developers with public docs: product glossary, endpoints/feeds, FAQs, and deep links to key actions (e.g., /signup, /demo, /returns).

- Expose a clean RSS and a simple JSON index of canonical pages (title, updated, summary, URL). Agents will find and quote it.

- For e‑commerce: ensure product feeds are fresh (price, availability, shipping, returns). Mark up deals and seasonal promos with structured data.

- Want to avoid agent sprawl? Consolidate capability once. See: Reusable AI Skills Library.
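One possible shape for the JSON index of canonical pages is sketched below, using the four fields named above (title, updated, summary, URL). The Page class, the "pages" wrapper key, and the example.com URLs are assumptions made for illustration, not a standard that agents are known to require.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Page:
    title: str
    updated: str  # ISO 8601 date, e.g. "2025-12-15"
    summary: str
    url: str


def build_index(pages):
    """Serialize canonical pages as JSON, most recently updated first."""
    # ISO 8601 date strings sort correctly as plain strings.
    ordered = sorted(pages, key=lambda p: p.updated, reverse=True)
    return json.dumps({"pages": [asdict(p) for p in ordered]}, indent=2)


# Hypothetical pages, for illustration only.
pages = [
    Page("Pricing", "2025-12-01", "Plans and limits.", "https://example.com/pricing"),
    Page("Returns policy", "2025-12-15", "How to start a return.", "https://example.com/returns"),
]
index_json = build_index(pages)
print(index_json)
```

Serving this output at a stable path under your docs hub, and regenerating it on publish, keeps the "updated" values trustworthy.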

Day 3 — Protect your data at the browser edge

- Freeze extension installs/updates; move to an allowlist. Require managed profiles for work; block personal sync on corp devices.

- Deploy AI‑aware DLP for forms/clipboard. Add prompt banners and auto‑redaction for PII and secrets.

- Run honey‑token tests. If tokens appear in outbound logs, you have leakage. Fix before enabling browser AI features. Full sprint: follow this guide.
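To make the redaction and honey‑token ideas concrete, here is a minimal sketch. The token value, the sk_ key prefix, and both regular expressions are illustrative assumptions; a real DLP product detects far more than this, and your outbound logs will have their own format.

```python
import re

# Hypothetical honey token: a fake secret planted in test prompts and forms.
HONEY_TOKEN = "hn-canary-7f3a9c"

# Deliberately simple patterns; the "sk_" key prefix is an assumed format.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "api_key": re.compile(r"\bsk_[A-Za-z0-9]{16,}\b"),
}


def redact(text):
    """Replace each matched pattern with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{label}]", text)
    return text


def find_leaks(log_lines, token=HONEY_TOKEN):
    """Return 1-based line numbers of log lines that contain the honey token."""
    return [n for n, line in enumerate(log_lines, start=1) if token in line]


sample = "Contact jane@example.com, key sk_abcdefghijklmnop1234"
print(redact(sample))

outbound_log = [
    "GET https://app.example.com/health 200",
    "POST https://third-party.example.net/collect body=hn-canary-7f3a9c",
]
print(find_leaks(outbound_log))
```

Any non-empty result from find_leaks means the planted token left the browser, which is the signal to hold off on enabling browser AI features.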

Day 4 — Measurement for AI surfaces

- UTM standards for AI surfaces: utm_source=ai-browser, utm_medium=summary, utm_campaign=gemini (or firefox-ai).

- Annotate key queries and pages in Search Console. Watch for referrers from Gemini/Gemini app and new query classes.

- Set up event goals for AI deep links: book_demo, start_return, view_pricing.
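The UTM convention above can be applied and read back with a few lines of standard-library code. This is a sketch under stated assumptions: the function names are hypothetical, and the set of ref values simply mirrors the deep-link examples later in this post.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# ref values taken from this post's deep-link examples.
AI_REFS = {"ai-browser", "ai-card", "gemini", "firefox-ai"}


def tag_url(url, campaign, source="ai-browser", medium="summary"):
    """Add the AI-surface UTM parameters, keeping any existing query string."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


def classify_landing(url):
    """Return an AI-surface label for a landing URL, or None if it has none."""
    query = dict(parse_qsl(urlsplit(url).query))
    if query.get("utm_source") == "ai-browser":
        return query.get("utm_campaign", "unknown")
    if query.get("ref") in AI_REFS:
        return query["ref"]
    return None


tagged = tag_url("https://example.com/pricing?plan=pro", "gemini")
print(tagged)
print(classify_landing(tagged))
```

Running classify_landing over landing URLs before events such as book_demo or view_pricing are recorded lets you attach the AI-surface label to each conversion.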

Day 5 — Be “preferred source” material

- Publish a short “Why you can trust us” page (authorship, methods, conflicts). Link to it site‑wide. It can improve pin‑worthiness and citation odds.

- Ship a weekly “source‑ready” brief: definition + updated stat + primary link. AI layers favor clear, recent facts.

- Revisit licensing posture as AI crawlers expand. If you’re evaluating pay‑to‑crawl or RSL, use this 7‑day plan.

Day 6 — Design AI‑first customer journeys

- Assume the first interaction is an AI card in the browser. Add deep links that complete tasks in 1–2 clicks (start a free trial, schedule, add to cart).

- For support: ship short, structured answers your agent can quote verbatim (policy, steps, exceptions). Add guardrails for refunds/PII.

- For SaaS: build demo and ROI calculators that can render cleanly as snippets. Include a “verify on site” link to boost trust and clicks.

Day 7 — Governance and comms

- Publish an internal policy for browser AI: which features are enabled, allowed origins for agent actions, and when human approval is required.

- Brief sales/support on new flows: how to attribute AI‑surface leads, and how to escalate sensitive queries.

- Review compliance obligations across states and platforms; align messaging with your privacy pages and app store policies.
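An internal policy is easier to enforce when it is also written down as data. The sketch below shows one way to encode allowed origins and human-approval rules; the POLICY structure, the action names, and the example.com origins are all hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical policy, for illustration only.
POLICY = {
    "allowed_origins": {"https://app.example.com", "https://docs.example.com"},
    "needs_human_approval": {"issue_refund", "export_customer_data"},
}


def origin_of(url):
    """Return scheme://host[:port] for a URL."""
    parts = urlsplit(url)
    return f"{parts.scheme}://{parts.netloc}"


def decide(action, url, policy=POLICY):
    """Return 'deny', 'needs_approval', or 'allow' for an agent action."""
    if origin_of(url) not in policy["allowed_origins"]:
        return "deny"
    if action in policy["needs_human_approval"]:
        return "needs_approval"
    return "allow"


print(decide("book_demo", "https://app.example.com/demo"))
print(decide("issue_refund", "https://app.example.com/refunds"))
print(decide("book_demo", "https://evil.example.net/demo"))
```

The origin check runs first so that an action from an unlisted origin is denied outright instead of being queued for approval. Note that this comparison is case-sensitive on the host; normalize hosts before comparing if your URLs vary in case.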

Real‑world examples

For e‑commerce

- Query: “Find a breathable running shoe under $120 and show me returns policy.” Your product wins if your feed is fresh, your returns text is structured, and you have a short FAQ the AI can cite.

- Deep links to test: /product/slug?ref=ai-browser, /returns?ref=ai-card, and cart adds with a preselected size/color.

For B2B SaaS

- Query: “Compare SOC 2 compliant ticketing tools with AI summarization.” Publish a table with controls, logs, and data retention—then link to your audit letter. Make it easy for the AI to quote.

- Deep links to test: /demo?ref=gemini, /pricing?ref=firefox-ai, and a docs page that outlines your API rate limits and auth.

Common pitfalls to avoid

- Thin pages that mirror AI summaries. If your page adds no new evidence, you won’t earn a citation or a click.

- Out‑of‑date facts. AI layers tend to de‑prioritize stale stats. Add “Last updated” stamps and keep a change log.

- Unmanaged extensions. Treat the browser like production. Start with our 7‑day browser security sprint.
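A small check can flag pages whose “Last updated” stamp has gone stale. This sketch assumes you can export each page's last-updated date as an ISO string; the 90-day threshold and the sample paths are arbitrary examples, not recommendations from any AI vendor.

```python
from datetime import date


def stale_pages(pages, today, max_age_days=90):
    """Return URLs whose last-updated date is older than max_age_days."""
    return [
        url
        for url, updated in pages.items()
        if (today - date.fromisoformat(updated)).days > max_age_days
    ]


# Hypothetical pages mapped to their "Last updated" dates.
pages_updated = {
    "/pricing": "2025-12-01",
    "/compare/headless-cms": "2025-06-01",
}
print(stale_pages(pages_updated, today=date(2025, 12, 18)))
```

Passing today explicitly, instead of calling date.today() inside the function, keeps the check reproducible in a scheduled job or a test.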

The bottom line

Browser AI is the new homepage. With Firefox adding opt‑in AI and Gemini 3 Flash becoming the default in Google’s Gemini app, your growth plan should assume AI‑first discovery and agentic actions. Make your pages quotable, your feeds fresh, your analytics AI‑aware—and your browser security airtight.

Ship it faster with HireNinja

- HireNinja WordPress Blogger: turn briefs into source‑ready pages with schema, tables, and internal links.

- HireNinja Customer Support: mine real questions and generate structured answers that AI layers will cite.

- HireNinja Governance: keep “Last updated” stamps fresh, verify links monthly, and enforce page checklists before publish.

Ready to win the browser‑AI shift? Try HireNinja and launch this 7‑day rollout today.