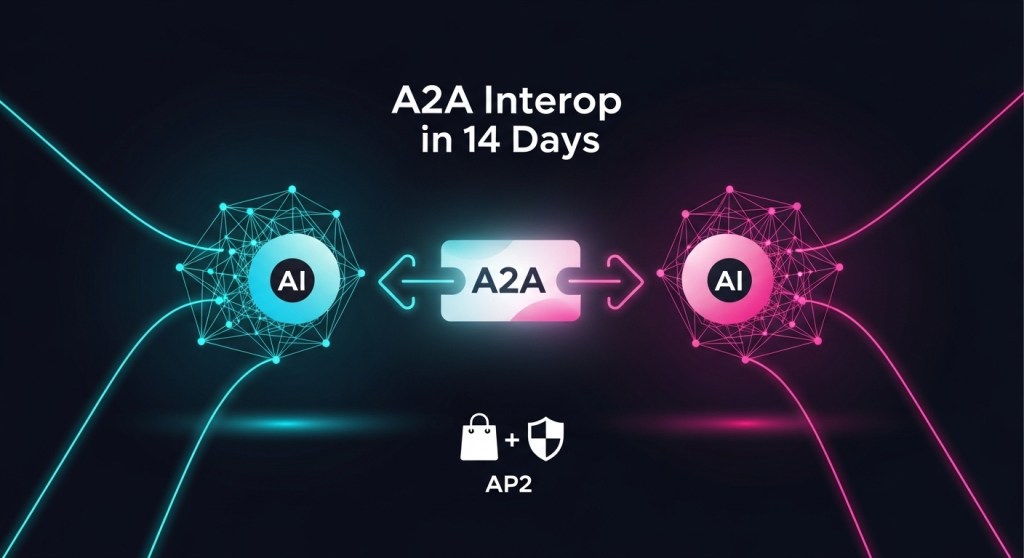

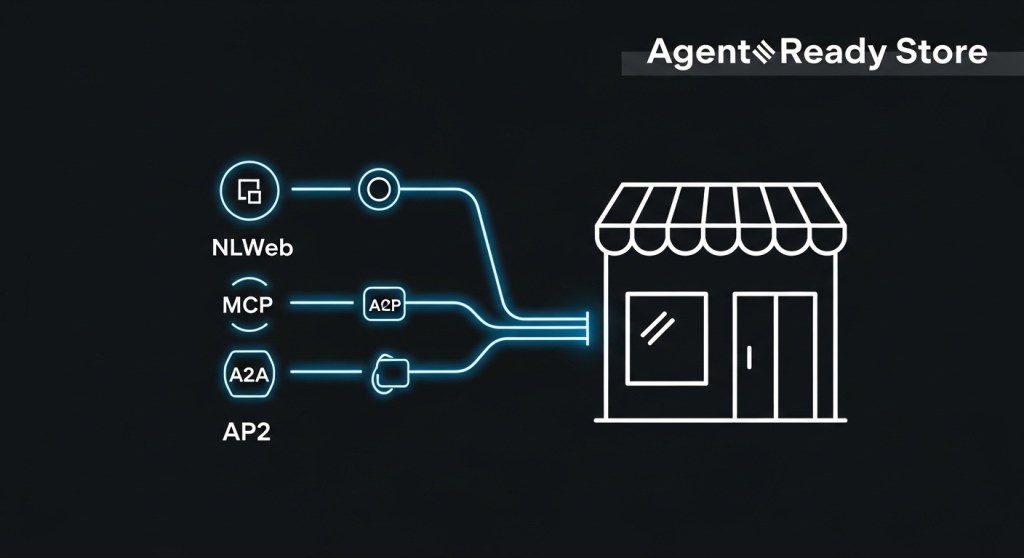

A2A Interoperability in 2025: How to Connect AgentKit, Agentforce 360, and MCP—Plus Go AP2‑Ready in 14 Days

Agent platforms and protocols matured fast this fall. OpenAI’s AgentKit made building and evaluating agents easier; Salesforce’s Agentforce 360 put enterprise agents into daily workflows; Google’s Agent2Agent (A2A) and Anthropic’s Model Context Protocol (MCP) created shared rails for agents to talk and use tools. Put them together and you can ship reliable, governed automations—and even agent‑assisted checkout—without a 6‑month project.

What each piece does (in plain English)

- A2A: An open protocol so independent agents can discover each other, exchange tasks, and collaborate securely across vendors.

- MCP: A standard for connecting agents to tools, data, and systems (think: a “USB‑C for agents”).

- AgentKit: OpenAI’s toolkit (Agent Builder, ChatKit, Evals for Agents) to design, embed, and measure agent workflows.

- Agentforce 360: Salesforce’s enterprise agent platform that plugs into Customer 360, Slack, and Google Workspace.

- AP2 (Agent Payments Protocol): An open protocol for agent‑initiated purchases that builds on A2A/MCP to add authorization and auditability for commerce.

Reference architecture (one afternoon to whiteboard)

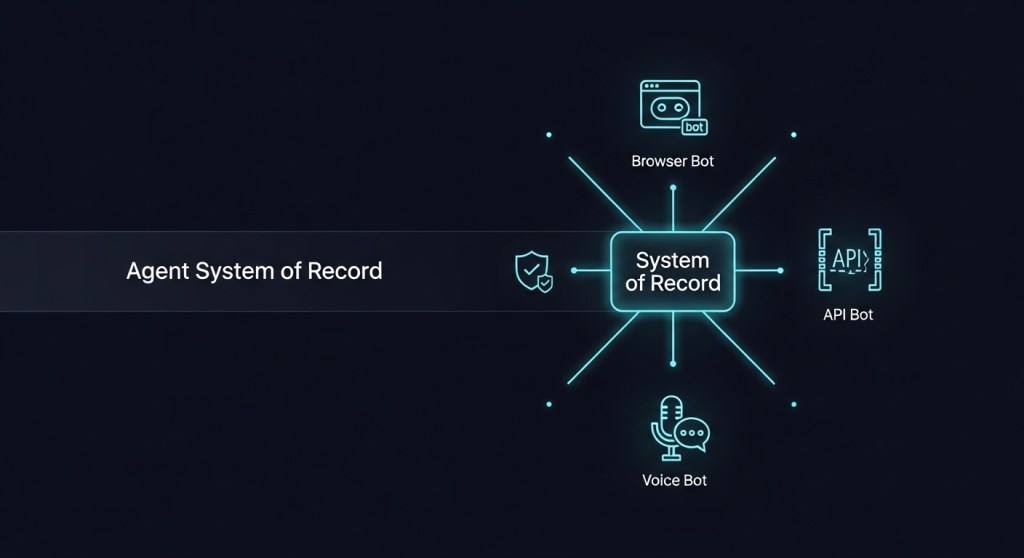

Start with a Host Orchestrator Agent (AgentKit or Agentforce) that receives the user’s goal. It delegates via A2A to specialist remote agents (pricing, catalog, support, fraud). Those specialists call tools and data through MCP servers (e.g., Postgres, Shopify Admin API, Slack, Drive). If a purchase is needed, the Host triggers an AP2 flow to obtain a cryptographic mandate, capture user intent (human‑present or not), and complete the transaction with traceability.
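The flow above can be sketched in a few lines. This is a minimal in-process sketch, not an A2A, MCP, or AP2 client: the specialists are plain Python functions standing in for remote agents, and every name here (`HostOrchestrator`, `pricing_agent`, `fraud_agent`, the `ap2:request_mandate` trace marker, the 50,000-cent fraud limit) is a hypothetical chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable

# A specialist takes a task payload and returns a result payload.
# In production these would be remote agents reached over A2A.
Specialist = Callable[[dict], dict]


@dataclass
class HostOrchestrator:
    specialists: dict[str, Specialist]
    trace: list[str] = field(default_factory=list)

    def delegate(self, skill: str, task: dict) -> dict:
        """Route a subtask to the specialist registered for `skill`."""
        if skill not in self.specialists:
            raise LookupError(f"no specialist registered for {skill!r}")
        self.trace.append(f"delegate:{skill}")
        return self.specialists[skill](task)

    def handle_goal(self, cart: dict) -> dict:
        quote = self.delegate("pricing", cart)
        risk = self.delegate("fraud", {**cart, **quote})
        # Only start a payment flow when there is something to pay for
        # and the fraud specialist did not object.
        if quote["total_cents"] > 0 and risk["ok"]:
            self.trace.append("ap2:request_mandate")  # placeholder, not a real AP2 call
        return {"quote": quote, "risk": risk, "trace": list(self.trace)}


def pricing_agent(task: dict) -> dict:
    # Hypothetical pricing specialist: sums line items in integer cents.
    total = sum(item["price_cents"] * item["qty"] for item in task["items"])
    return {"total_cents": total}


def fraud_agent(task: dict) -> dict:
    # Hypothetical fraud specialist: flags anything at or above 50,000 cents.
    return {"ok": task["total_cents"] < 50_000}


host = HostOrchestrator({"pricing": pricing_agent, "fraud": fraud_agent})
result = host.handle_goal({"items": [{"price_cents": 1999, "qty": 2}]})
print(result["trace"])
```

Keeping the trace as a simple ordered list makes it easy to assert on delegation order in tests before any network transport is involved.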

14‑Day interop plan

Day 1–2: Scope and guardrails

- Pick a single use case (e.g., “recover abandoned carts via email + chat + discount code”). Define success KPIs: recovery rate, AOV change, CSAT impact, human‑handoff rate.

- Decide on your Host: AgentKit (developer‑led) or Agentforce 360 (CRM‑led). Document data boundaries, PII handling, and approvals.

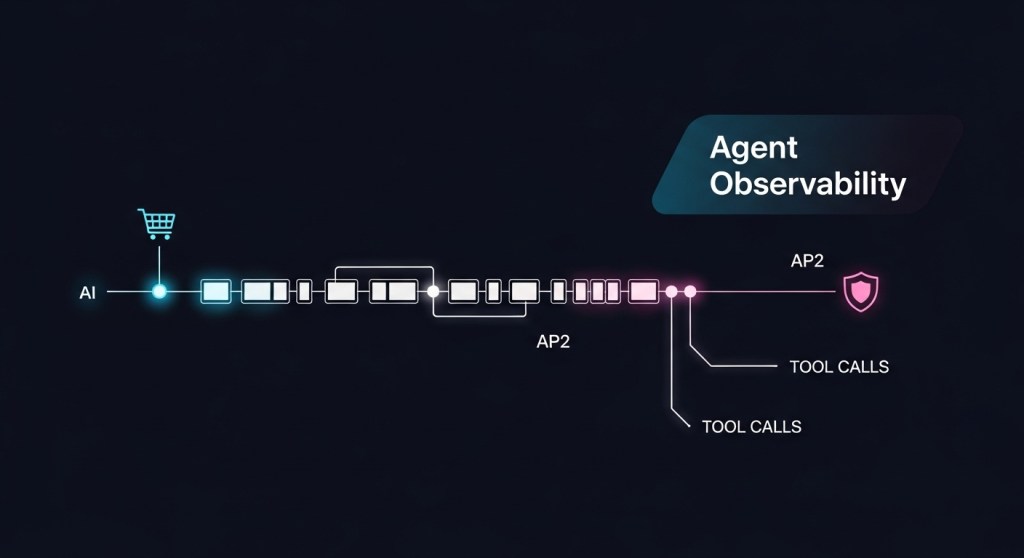

- Adopt controls from our 2025 Agent Governance Checklist and Agent Observability Blueprint.

Day 3–5: Wire up MCP for tools and data

- Stand up MCP servers for your systems of record (e.g., product DB, order history, email). Start with read‑only, then add scoped writes.

- Register MCP connectors in AgentKit (via Connector Registry) or expose them to Agentforce using your integration layer.

- Instrument OpenTelemetry traces at tool and step level; push logs to your SIEM. See our observability guide.
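The "read‑only first, then scoped writes" rule can be enforced in a thin gateway in front of your tools. The sketch below is plain Python and does not use any MCP SDK; `Tool`, `ToolGateway`, the tool names, and the `orders:write` scope are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, Optional


@dataclass(frozen=True)
class Tool:
    name: str
    handler: Callable[..., Any]
    required_scope: Optional[str] = None  # None means read-only, no scope needed


class ToolGateway:
    """Hypothetical gate that blocks write tools unless their scope was granted."""

    def __init__(self, granted_scopes: Iterable[str] = ()):
        self._tools: dict[str, Tool] = {}
        self._granted = frozenset(granted_scopes)

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, **kwargs: Any) -> Any:
        tool = self._tools.get(name)
        if tool is None:
            raise LookupError(f"unknown tool {name!r}")
        if tool.required_scope is not None and tool.required_scope not in self._granted:
            raise PermissionError(f"{name!r} needs scope {tool.required_scope!r}")
        return tool.handler(**kwargs)


# Toy system of record standing in for an order database.
ORDERS = {"o-1": {"status": "abandoned", "discount_code": None}}


def get_order(order_id: str) -> dict:
    return dict(ORDERS[order_id])


def apply_discount(order_id: str, code: str) -> dict:
    ORDERS[order_id]["discount_code"] = code
    return dict(ORDERS[order_id])


def build_gateway(scopes: Iterable[str] = ()) -> ToolGateway:
    gateway = ToolGateway(scopes)
    gateway.register(Tool("orders.get", get_order))
    gateway.register(Tool("orders.apply_discount", apply_discount, required_scope="orders:write"))
    return gateway


read_only = build_gateway()
print(read_only.call("orders.get", order_id="o-1"))
```

Starting every agent with `build_gateway()` and granting `orders:write` only after review mirrors the rollout order described above.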

Day 6–8: Enable A2A multi‑agent collaboration

- Create specialist remote agents for pricing, copywriting, and support. Publish an AgentCard for each (capabilities, auth, endpoint).

- From the Host, delegate subtasks via A2A to those specialists; stream updates back to your UI (site, chat, or email).

Example AgentCard (trimmed and illustrative; check field names against the current A2A spec)

    {
      "id": "pricing-agent",
      "version": "0.2.2",
      "capabilities": ["quote", "discount"],
      "endpoint": "https://agents.example.com/pricing",
      "auth": {"type": "bearer"}
    }
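Before delegating, the Host can sanity-check cards and pick a specialist by capability. The sketch below works on the trimmed card shape shown in this article, not the full A2A schema; `validate_card`, `agents_for`, and the two extra cards are hypothetical.

```python
import json
from urllib.parse import urlparse

# Fields from the trimmed example card in this article (not the full A2A schema).
REQUIRED_FIELDS = ("id", "version", "capabilities", "endpoint", "auth")


def validate_card(card: dict) -> list[str]:
    """Return a list of problems; an empty list means the card looks usable."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in card]
    if "endpoint" in card and urlparse(str(card["endpoint"])).scheme != "https":
        problems.append("endpoint must use https")
    return problems


def agents_for(cards: list[dict], capability: str) -> list[str]:
    """IDs of valid cards that advertise the requested capability."""
    return [
        card["id"]
        for card in cards
        if not validate_card(card) and capability in card["capabilities"]
    ]


cards = json.loads("""
[
  {"id": "pricing-agent", "version": "0.2.2", "capabilities": ["quote", "discount"],
   "endpoint": "https://agents.example.com/pricing", "auth": {"type": "bearer"}},
  {"id": "copy-agent", "version": "0.2.2", "capabilities": ["draft_email"],
   "endpoint": "https://agents.example.com/copy", "auth": {"type": "bearer"}},
  {"id": "legacy-pricing", "version": "0.1.0", "capabilities": ["quote"],
   "endpoint": "http://agents.example.com/legacy", "auth": {"type": "bearer"}}
]
""")

print(agents_for(cards, "quote"))
```

Rejecting non-HTTPS endpoints at lookup time keeps a misconfigured card from ever receiving a task.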

Day 9–11: Go AP2‑ready for checkout

- Integrate AP2’s mandate and intent flows; start with the human‑present card payment samples, then expand to human‑not‑present flows.

- Log every step (discovery → intent → authorization → settlement) to your audit lake; include mandate IDs and user presence flags.

- Add step‑up challenges for riskier scenarios (high value, address mismatch, new device).
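The logging and step‑up bullets above can be prototyped before any AP2 integration exists. This is a sketch under stated assumptions: it does not call AP2, the record fields and the `CheckoutContext` shape are invented for illustration, and the 20,000-cent threshold is an arbitrary placeholder to tune against your own risk data.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Stages named in the logging bullet above.
STAGES = ("discovery", "intent", "authorization", "settlement")


@dataclass(frozen=True)
class CheckoutContext:
    amount_cents: int
    billing_postcode: str
    shipping_postcode: str
    known_device: bool
    human_present: bool


def step_up_reasons(ctx: CheckoutContext, high_value_cents: int = 20_000) -> list[str]:
    """Return why this checkout should get an extra challenge (empty list: none)."""
    reasons = []
    if ctx.amount_cents >= high_value_cents:
        reasons.append("high_value")
    if ctx.billing_postcode != ctx.shipping_postcode:
        reasons.append("address_mismatch")
    if not ctx.known_device:
        reasons.append("new_device")
    return reasons


def audit_event(stage: str, mandate_id: str, ctx: CheckoutContext) -> dict:
    """Build one audit record carrying the mandate ID and user presence flag."""
    if stage not in STAGES:
        raise ValueError(f"unknown stage {stage!r}")
    return {
        "ts": datetime.now(timezone.utc).isoformat(),
        "stage": stage,
        "mandate_id": mandate_id,
        "human_present": ctx.human_present,
        "step_up_reasons": step_up_reasons(ctx),
    }


ctx = CheckoutContext(
    amount_cents=25_000,
    billing_postcode="94105",
    shipping_postcode="10001",
    known_device=True,
    human_present=True,
)
print(audit_event("authorization", "mandate-123", ctx))
```

Returning reasons rather than a boolean lets the audit record explain why a challenge fired, which helps when tuning the step‑up challenge rate later.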

Day 12–14: Evals, safety, and launch

- Build Evals for Agents suites in AgentKit or your test harness. Include adversarial tests modeled on the failure modes Microsoft reported in its synthetic marketplace study (e.g., hostile merchant agents, dark patterns).

- Run a controlled pilot (5–10% traffic). Define manual overrides and human‑in‑the‑loop criteria. Track ROI and safety KPIs daily.
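One common way to hold a pilot at a fixed share of traffic is deterministic hash bucketing, so a given user always lands on the same side of the split. The sketch below is one such approach, not something prescribed by AgentKit or Agentforce; the function name and the salt value are assumptions.

```python
import hashlib


def in_pilot(user_id: str, percent: float, salt: str = "agent-pilot-v1") -> bool:
    """Deterministically assign a user to the pilot for a given traffic share.

    The salt is a hypothetical experiment name; changing it reshuffles users.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


users = [f"user-{i}" for i in range(20_000)]
share = sum(in_pilot(u, 5) for u in users) / len(users)
print(f"pilot share: {share:.2%}")
```

Because assignment depends only on the salt and the user ID, raising the pilot from 5% to 10% keeps every existing pilot user in the pilot.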

KPIs to track from day one

- Outcome: Cart recovery rate, revenue uplift, AOV, refund rate.

- Reliability: Task success %, human‑handoff %, average steps per resolution.

- Safety/compliance: % of AP2 mandates with verified signatures, step‑up challenge rate, PII redaction coverage, incident MTTR.

- Cost: Cost per resolved task vs. human baseline; model/tool/inference spend per order.
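The reliability and cost KPIs above reduce to simple ratios over per-task records. A minimal sketch, assuming a hypothetical `TaskRecord` shape that your tracing pipeline would need to populate:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRecord:
    succeeded: bool
    handed_off: bool
    steps: int
    cost_usd: float


def reliability_kpis(records: list[TaskRecord]) -> dict:
    """Compute task success %, human-handoff %, steps per resolution, and cost per resolved task."""
    if not records:
        raise ValueError("need at least one record")
    total = len(records)
    resolved = [r for r in records if r.succeeded]
    return {
        "task_success_pct": 100 * len(resolved) / total,
        "human_handoff_pct": 100 * sum(r.handed_off for r in records) / total,
        # Averages over resolved tasks are undefined when nothing resolved.
        "avg_steps_per_resolution": (
            sum(r.steps for r in resolved) / len(resolved) if resolved else None
        ),
        # All spend, including failed attempts, is charged to resolved tasks.
        "cost_per_resolved_task_usd": (
            sum(r.cost_usd for r in records) / len(resolved) if resolved else None
        ),
    }


records = [
    TaskRecord(succeeded=True, handed_off=False, steps=4, cost_usd=0.25),
    TaskRecord(succeeded=True, handed_off=False, steps=6, cost_usd=0.25),
    TaskRecord(succeeded=True, handed_off=True, steps=5, cost_usd=0.5),
    TaskRecord(succeeded=False, handed_off=True, steps=9, cost_usd=0.5),
]
print(reliability_kpis(records))
```

Charging failed attempts to resolved tasks is a deliberate choice here: it keeps the comparison against a human baseline honest about wasted spend.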

Risk and governance: bake it in

Agent systems fail in subtle ways—over‑delegation, hallucinated confirmations, or phishing‑like prompts. Use deny‑by‑default policies for A2A tasks, require AP2 mandates for spend, and maintain full traces for every agent step. Our governance checklist pairs well with this blueprint.
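A deny‑by‑default policy can start as an explicit allowlist keyed by caller and callee, with an extra mandate check for spend. The sketch below is illustrative only: the agent IDs, task types, and the `authorize` helper are hypothetical, and a real deployment would verify the mandate's signature rather than just check that an ID is present.

```python
from typing import Optional

# Anything not listed here is denied.
ALLOWED_TASKS = {
    ("host", "pricing-agent"): {"quote", "discount"},
    ("host", "support-agent"): {"answer_ticket"},
    ("host", "checkout-agent"): {"purchase"},
}

# Task types that move money and therefore need a mandate.
SPEND_TASKS = {"purchase"}


def authorize(
    caller: str, callee: str, task_type: str, mandate_id: Optional[str] = None
) -> tuple[bool, str]:
    """Return (allowed, reason) for a delegation request."""
    allowed = ALLOWED_TASKS.get((caller, callee), frozenset())
    if task_type not in allowed:
        return False, "not on allowlist"
    if task_type in SPEND_TASKS and not mandate_id:
        return False, "spend requires a mandate"
    return True, "ok"


print(authorize("host", "pricing-agent", "quote"))
print(authorize("pricing-agent", "checkout-agent", "purchase", "mandate-123"))
```

Note that the second call is denied even with a mandate, because only the Host is allowlisted to delegate purchases; this is the over‑delegation case the policy is meant to catch.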

Stack choices: when to pick what

- AgentKit if you need fast iteration, embedded chat UIs, and built‑in evals around your own product.

- Agentforce 360 if your workflows and data already live in Salesforce and Slack and you want CRM‑native governance, cases, and analytics.

- A2A + MCP either way to reduce vendor lock‑in and enable cross‑agent collaboration with standardized tool access.

Example rollout for e‑commerce

- Host agent in AgentKit; connect catalog and orders via MCP servers.

- Expose pricing and support agents via A2A to the Host.

- Pilot the AP2 human‑present card flow on checkout; require a mandate for discounts over a set threshold.

- Instrument traces and ship dashboards following our observability blueprint.

What’s next

Standards will keep evolving (e.g., AP2 moving from v0.1 toward broader payment methods). Keep your agents protocol‑first, with clear guardrails, and your org will be ready for the agentic holiday season and beyond.
